One of the persistent hurdles for autonomous and advanced driver-assistance systems (ADAS) is incomplete situational awareness. Cameras and lidar can struggle in low visibility, and even onboard radar isn’t immune to blind spots caused by occlusion from other vehicles or infrastructure. A research team at Rice University is tackling this limitation not by changing how cars sense, but by augmenting where sensing happens.

The technology, called EyeDAR, consists of low-power millimeter-wave radar sensors that can be mounted on roadside infrastructure such as streetlights or traffic signals. These sensors act as external complements to vehicle sensors, detecting reflections that the vehicle’s own radar might miss and feeding direction-of-arrival information back into the vehicle’s perception stack.

Why In-Vehicle Sensors Don’t Always See Everything

Automated systems today rely on a suite of onboard sensors: cameras for visual context, lidar for high-resolution depth maps, and radar for motion and range detection.

Each technology has strengths, but also blind spots:

- Cameras require good lighting and are susceptible to glare or shadows.

- Lidar provides detailed point clouds but can be hampered by weather conditions like fog or rain.

- Onboard radar handles poor weather well, but the physics of signal reflection and vehicle geometry means radar signals can scatter away from the vehicle before returning usable data.

That means pedestrians stepping out from behind a truck, cyclists approaching from an angle outside the vehicle’s radar field, or vehicles emerging at an intersection can all escape reliable detection until it is too late. Roadside sensors offer a strategic fix by filling in visibility gaps that onboard systems can’t cover because of range, occlusion, or vehicle body blockage.

Leveraging Roadside Infrastructure for Perception

EyeDAR is roughly the size of an orange and designed to be inexpensive and low power. Placed at fixed locations such as traffic lights and intersections, it extends the sensing perimeter for vehicles passing through. Because these sensors are not constrained by the position or orientation of a specific vehicle, they can capture reflections that a car’s own radar might never see.

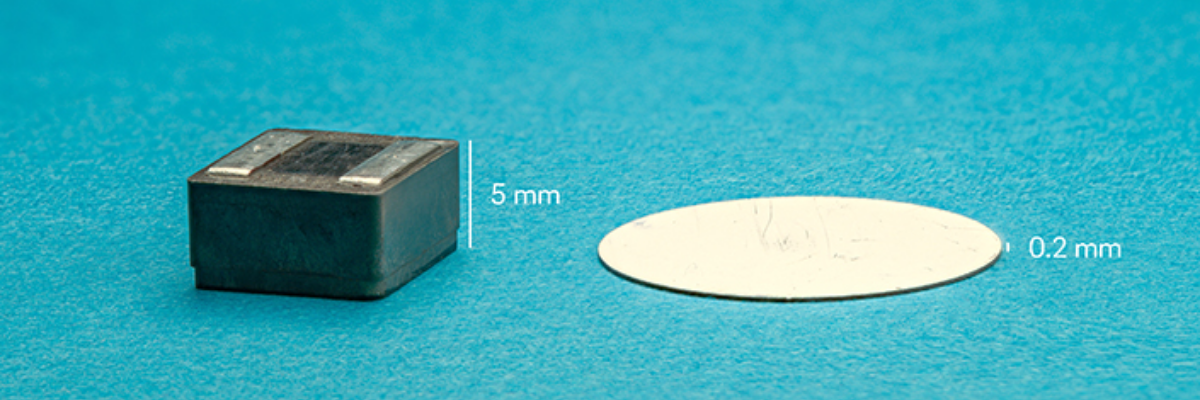

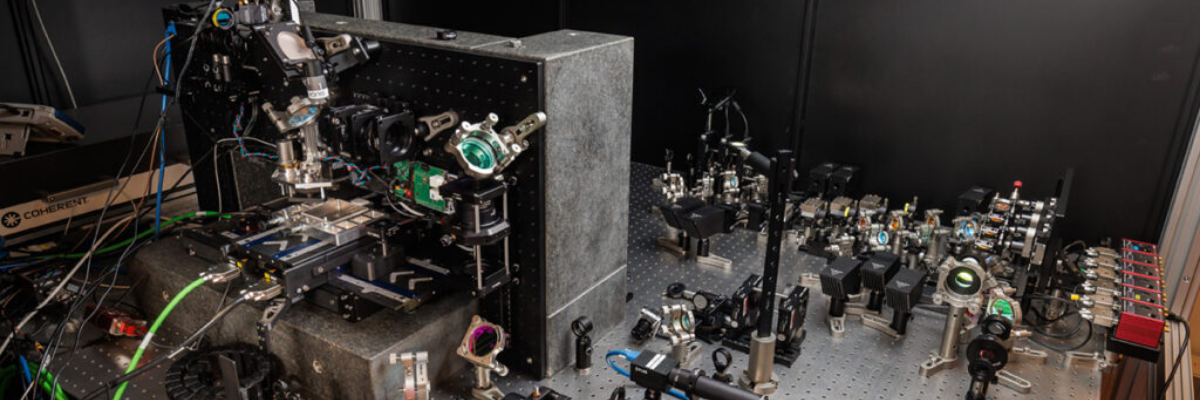

Structurally, EyeDAR combines a 3D-printed Luneburg lens, similar in concept to the lens of a human eye, with an antenna array that functions like a retina. The lens’s internal structure focuses incoming radar energy from any direction onto the antenna array, so the position of the focused spot on the array indicates where the signal came from. This allows the device to infer the direction of the reflected signal without heavy signal processing.

This physical approach to direction finding does much of the computational work in hardware, rather than in software. In test scenarios, the system resolved target directions more than 200 times faster than traditional radar designs that rely on digital angle estimation.
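To make the lens-and-retina idea concrete, here is a minimal sketch of how a direction could be read off such an array. It is not the EyeDAR team's method. It assumes each antenna element has already been labeled with the arrival angle it corresponds to and that a per-element received power reading is available. The function name `estimate_direction` and both parameters are hypothetical.

```python
def estimate_direction(powers, element_angles_deg):
    """Estimate arrival angle (degrees) from per-element received power.

    Assumption: the lens has already focused the incoming wave, so the
    strongest element marks the arrival direction. No FFT or matrix
    math is needed, only a peak search.
    """
    if not powers or len(powers) != len(element_angles_deg):
        raise ValueError("powers and element_angles_deg must be non-empty and equal length")

    # Coarse estimate: index of the element with the most received power.
    peak = max(range(len(powers)), key=powers.__getitem__)

    # Fine estimate: power-weighted average over the peak and its neighbors,
    # which lets the result fall between two element angles.
    lo = max(peak - 1, 0)
    hi = min(peak + 1, len(powers) - 1)
    window_p = powers[lo:hi + 1]
    window_a = element_angles_deg[lo:hi + 1]
    total = sum(window_p)
    if total == 0:
        return float(element_angles_deg[peak])
    return sum(p * a for p, a in zip(window_p, window_a)) / total


angles = [-30, -15, 0, 15, 30]
print(estimate_direction([0.0, 1.0, 2.0, 1.0, 0.0], angles))
```

The point of the sketch is the cost: a peak search over a handful of readings, in contrast to the digital angle estimation that conventional radar designs perform.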

Sensing and Communicating in One Device

A noteworthy aspect of EyeDAR is that it doesn’t transmit its own radar pulses. Instead, it alternates between absorbing incoming radar waves from a passing vehicle and reflecting them back in a controlled way that encodes information as a sequence of bits — essentially communicating sensor data back to the vehicle using the original radar signal. This clever design lets EyeDAR provide additional context without adding new emissions or requiring a separate communications link.
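The absorb-or-reflect scheme resembles on-off keying over a backscattered signal. The sketch below illustrates that general idea only. The article does not describe EyeDAR's actual modulation, framing, or bit rate, so every name and value here (`encode_bits`, `simulate_echo`, `decode_bits`, `samples_per_bit`, the amplitude levels) is an assumption.

```python
def encode_bits(bits, samples_per_bit=4):
    """Map each bit to a run of reflector states: 1 = reflect, 0 = absorb."""
    states = []
    for b in bits:
        if b not in (0, 1):
            raise ValueError("bits must be 0 or 1")
        states.extend([b] * samples_per_bit)
    return states


def simulate_echo(states, reflect_amp=1.0, absorb_amp=0.1):
    """Idealized echo amplitude seen by the vehicle radar for each state."""
    return [reflect_amp if s else absorb_amp for s in states]


def decode_bits(amplitudes, samples_per_bit=4, threshold=None):
    """Recover bits by averaging each bit period and comparing to a threshold.

    If no threshold is given, the midpoint between the strongest and weakest
    sample is used. That only works when both states occur in the message,
    for example through a known preamble (an assumption of this sketch).
    """
    if not amplitudes:
        return []
    if threshold is None:
        threshold = (max(amplitudes) + min(amplitudes)) / 2
    bits = []
    for i in range(0, len(amplitudes) - samples_per_bit + 1, samples_per_bit):
        chunk = amplitudes[i:i + samples_per_bit]
        bits.append(1 if sum(chunk) / len(chunk) > threshold else 0)
    return bits


message = [1, 0, 1, 1, 0]
echo = simulate_echo(encode_bits(message))
print(decode_bits(echo) == message)
```

A real link would also have to cope with noise, vehicle motion, and synchronization, none of which this sketch models.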

The design approach also keeps power consumption low and makes widespread deployment more feasible. For autonomous systems operating in dense urban settings — where blind spots around intersections and congested traffic present significant safety concerns — increasing the density of roadside sensors could materially improve perception fidelity.

Broader Implications for Infrastructure and Automation

EyeDAR’s potential isn’t limited to self-driving cars. The concept could be extended to robotic platforms, drones, and wearable sensors, creating a mesh of roadside or environmental “radar beacons” that provide spatial awareness far beyond what any individual platform could achieve on its own.

Networks of such sensors could share data with each other as well as with vehicles, blurring the boundary between vehicle-centric perception and infrastructure-assisted situational awareness.
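One way shared data could become more useful than any single reading: a direction of arrival gives only a bearing, but two bearings from sensors at known positions can be intersected to estimate a location. The sketch below is purely illustrative and is not described in the research. It assumes a shared flat map frame, with bearings measured counterclockwise from the +x axis. The function name `intersect_bearings` is hypothetical.

```python
import math


def intersect_bearings(p1, bearing1_deg, p2, bearing2_deg):
    """Return (x, y) where two bearing rays cross, or None if they do not.

    p1 and p2 are (x, y) sensor positions in a shared map frame (assumed known).
    """
    d1 = (math.cos(math.radians(bearing1_deg)), math.sin(math.radians(bearing1_deg)))
    d2 = (math.cos(math.radians(bearing2_deg)), math.sin(math.radians(bearing2_deg)))

    # 2D cross product of the two directions; near zero means parallel rays.
    denom = d1[0] * d2[1] - d1[1] * d2[0]
    if abs(denom) < 1e-9:
        return None

    dx, dy = p2[0] - p1[0], p2[1] - p1[1]
    # Solve p1 + t*d1 == p2 + s*d2 for the distances t and s along each ray.
    t = (dx * d2[1] - dy * d2[0]) / denom
    s = (dx * d1[1] - dy * d1[0]) / denom
    if t < 0 or s < 0:
        return None  # the lines cross behind one of the sensors

    return (p1[0] + t * d1[0], p1[1] + t * d1[1])


# Two sensors 10 m apart, both seeing the same target at 45 degrees inward.
print(intersect_bearings((0.0, 0.0), 45.0, (10.0, 0.0), 135.0))
```

In practice, bearing errors grow with distance and with shallow crossing angles, so a deployed system would need to weight or filter such estimates.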

Kun Woo Cho, who leads the EyeDAR project, frames this work as part of a broader push toward what she calls “analog computing”: taking advantage of physical sensor structures to handle tasks that would otherwise burden software and digital processing.

By offloading direction resolution into the hardware itself and then communicating that information through simple encoded reflections, EyeDAR sidesteps the need for complex angle-finding algorithms that can tax in-vehicle processors.

What This Means for Designers and Deployers

For engineers working on autonomous and assisted driving systems, the key takeaway is that perception no longer has to reside entirely within the vehicle. Roadside infrastructure — if equipped with the right sensors — can become an active partner in environment sensing, reducing blind spots and giving automated systems a richer set of inputs on which to base decisions.

Transport planners and system designers should watch this space as research transitions into field trials. The concept of augmenting onboard sensing with roadside radar meshes could reshape how we think about sensor placement, communication protocols, and the division of perception tasks between vehicle and infrastructure.

In the near term, technologies like EyeDAR invite reconsideration of where perception computing should live and how physical sensor design can complement digital intelligence in real-world autonomous systems.