Data centers are hitting a thermal wall. Racks that once drew 5 to 10 kilowatts now commonly exceed 50 kW, with some hyperscale and HPC deployments pushing past 100 kW. Air-based cooling systems designed for lower densities can’t keep up. The result is rising operating costs, more frequent thermal throttling, and growing pressure on local utilities.
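A rough way to see why air struggles at these densities is the sensible-heat relation: the airflow needed grows linearly with rack power for a fixed temperature rise across the rack. The sketch below is illustrative only. The air properties and the 12 K temperature rise are assumed textbook-style values, not figures from any specific facility, and `required_airflow_m3s` is a hypothetical helper name.

```python
# Approximate properties of air near room temperature (assumed values).
AIR_DENSITY_KG_M3 = 1.2
AIR_SPECIFIC_HEAT_J_KG_K = 1005.0
M3S_TO_CFM = 2118.88  # cubic metres per second to cubic feet per minute


def required_airflow_m3s(rack_power_kw: float, delta_t_k: float = 12.0) -> float:
    """Airflow needed to carry away rack_power_kw of heat.

    Uses Q = P / (rho * cp * dT), where dT is the assumed air temperature
    rise between rack inlet and outlet.
    """
    if delta_t_k <= 0:
        raise ValueError("delta_t_k must be positive")
    power_w = rack_power_kw * 1000.0
    return power_w / (AIR_DENSITY_KG_M3 * AIR_SPECIFIC_HEAT_J_KG_K * delta_t_k)


# Rack densities mentioned in the article, from legacy to high-density.
for kw in (5, 10, 50, 100):
    flow = required_airflow_m3s(kw)
    print(f"{kw:>4} kW rack: {flow:5.2f} m^3/s (~{flow * M3S_TO_CFM:,.0f} CFM)")
```

Under these assumptions a 100 kW rack needs ten times the airflow of a 10 kW rack, which is the kind of jump that fans and raised floors sized for older densities were not built to deliver.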

Where the Problem Is Showing Up

- Northern Virginia, the world’s largest data center hub, has seen cooling demand contribute to grid strain, forcing utilities to plan costly upgrades.
- Singapore froze new data center construction until operators could meet stricter efficiency standards, in part because of cooling challenges in its hot, humid climate.
- Microsoft’s Dublin campus relies on water-based cooling, which has triggered debate about sustainability and local resource use.
- Meta and Google are retrofitting liquid cooling into facilities originally built for air, an acknowledgment that airflow alone is no longer enough.

Cooling Technologies on the Table

Operators are moving toward methods that put the coolant closer to the source of heat:

-

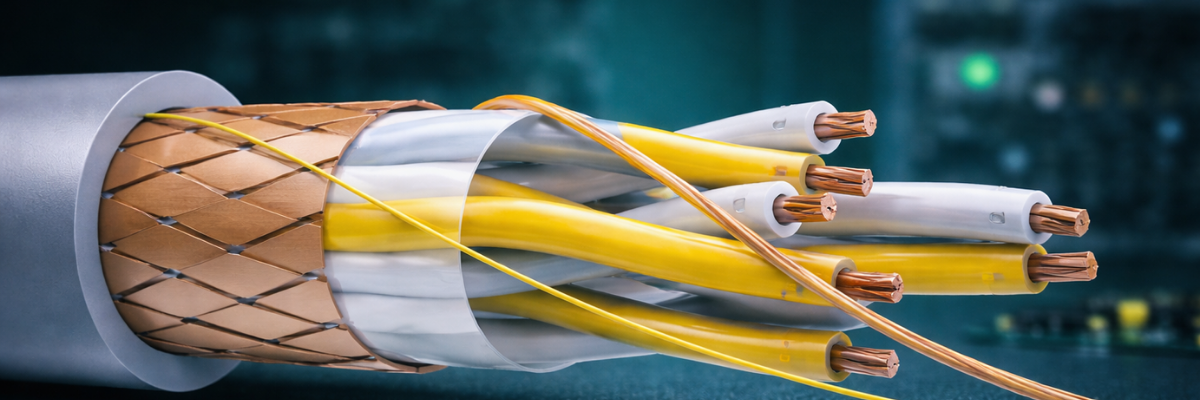

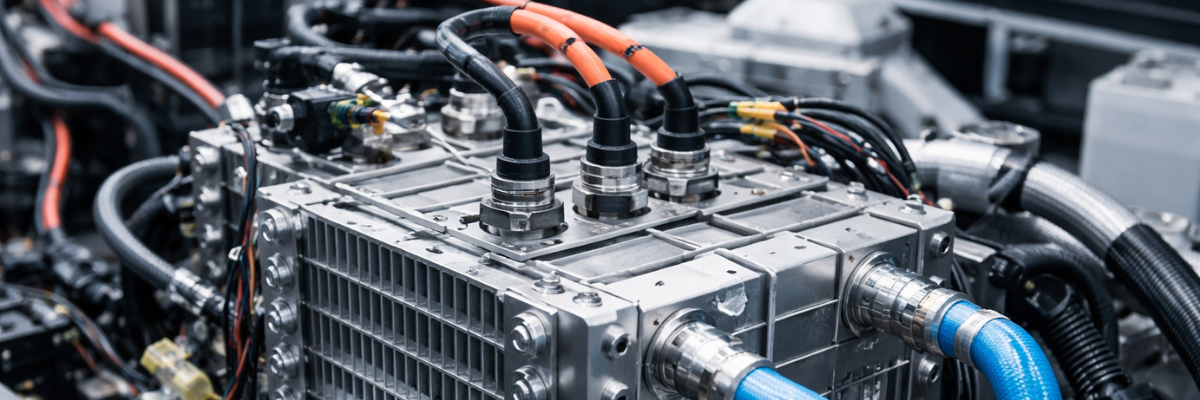

Direct liquid cooling with cold plates mounted on processors and GPUs.

-

Immersion cooling where entire servers are submerged in dielectric fluid. Vendors such as Submer and GRC are rolling out systems for racks above 80 kW.

-

Rear-door heat exchangers that retrofit onto racks, capturing and cooling exhaust air before it circulates.

-

Hybrid systems that mix liquid and air, helping facilities transition without a full rebuild.
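The appeal of liquid comes down to how much heat a given volume of coolant can carry. The following sketch compares the volumetric flow needed to remove the same heat load with air and with water. It is a simplification under stated assumptions: the fluid properties are approximate room-temperature values, water stands in for a cold-plate loop (real loops often use water-glycol mixes, and immersion systems use dielectric fluids with different properties), and the 80 kW load and 10 K temperature rise are chosen only for illustration.

```python
# Approximate fluid properties near room temperature (assumed, not from the article).
FLUIDS = {
    "air": {"density_kg_m3": 1.2, "specific_heat_j_kg_k": 1005.0},
    "water": {"density_kg_m3": 997.0, "specific_heat_j_kg_k": 4186.0},
}


def volumetric_flow_m3s(heat_load_kw: float, delta_t_k: float, fluid: str) -> float:
    """Coolant flow needed to absorb heat_load_kw with a delta_t_k temperature rise."""
    props = FLUIDS[fluid]
    heat_w = heat_load_kw * 1000.0
    return heat_w / (props["density_kg_m3"] * props["specific_heat_j_kg_k"] * delta_t_k)


def litres_per_minute(flow_m3s: float) -> float:
    """Convert cubic metres per second to litres per minute."""
    return flow_m3s * 1000.0 * 60.0


# Hypothetical 80 kW rack with a 10 K coolant temperature rise.
air_flow = volumetric_flow_m3s(80, 10, "air")
water_flow = volumetric_flow_m3s(80, 10, "water")

print(f"air:   {air_flow:.2f} m^3/s")
print(f"water: {litres_per_minute(water_flow):.0f} L/min")
print(f"air needs ~{air_flow / water_flow:,.0f}x the volume of water")
```

With these numbers, water moves the same heat in a few thousand times less volume than air, which is why bringing liquid to the chip or the rack door scales where airflow does not.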

Economics and Regulation

Cooling can consume 30 to 40 percent of a facility’s total power. In markets like Frankfurt and Amsterdam, where real estate is limited and governments enforce energy caps, that makes inefficient cooling not just costly but non-compliant. Regulators in the Netherlands now tie approvals for new data centers to demonstrated water and energy management. In Japan, some operators are experimenting with seawater cooling to offset demands on the grid.

Industry Response

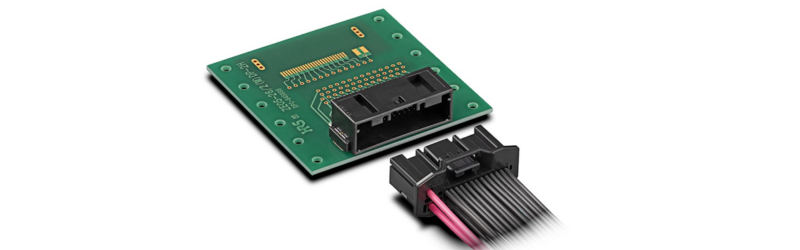

Chipmakers such as NVIDIA and AMD are designing processors with liquid cooling interfaces as standard. The Open Compute Project is pushing guidelines for liquid distribution and quick-connect fittings to ensure interoperability. Colocation operators are beginning to market immersion-ready suites to high-density tenants.

Outlook

The need for compute is not slowing, but the ability to cool it has become a defining constraint. Data centers that fail to adapt face higher costs, shorter equipment lifespans, and, in some regions, regulatory barriers. The future of digital infrastructure may depend less on how fast chips can process data than on how effectively facilities can move the heat out.