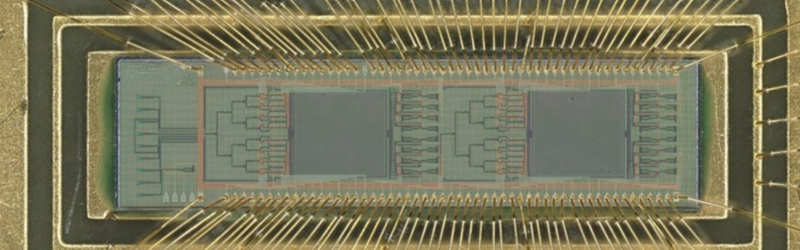

Artificial intelligence is everywhere, but it comes at a steep energy cost. Training and running today’s AI models can draw megawatts of power, raising questions about both scalability and sustainability. Researchers at the University of Florida think they’ve found a way forward: a silicon photonic chip that performs one of AI’s most power-hungry operations, convolution, with light instead of electricity.

Convolutions Without the Power Drain

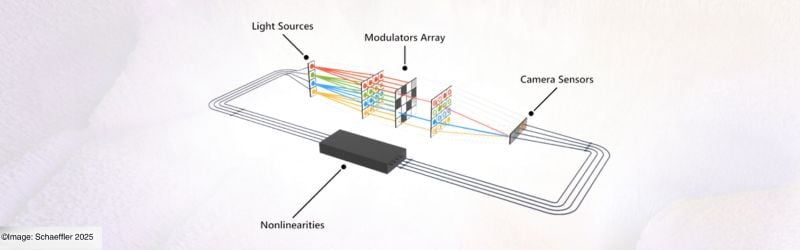

At the core of many AI systems is the convolution operation, a mathematical process that helps neural networks detect patterns in images, video, and text. On conventional hardware, every convolution breaks down into long chains of digital multiply-accumulate operations, which add up to an enormous amount of computation. The Florida team tackled this by etching microscopic Fresnel lenses (flat, ultrathin optical elements) directly onto a silicon chip.
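For readers who haven’t worked with convolutions, here is a minimal sketch of the digital operation the chip offloads. It assumes a single-channel image, stride 1, and no padding, as in a typical CNN layer. Like most deep-learning frameworks, it does not flip the kernel (so it is technically cross-correlation). The function name `conv2d_valid` and the example image and kernel are illustrative, not taken from the UF work.

```python
def conv2d_valid(image, kernel):
    """Slide `kernel` over `image` (stride 1, no padding) and return the
    weighted sums. The kernel is not flipped, matching CNN frameworks."""
    ih, iw = len(image), len(image[0])
    kh, kw = len(kernel), len(kernel[0])
    out = []
    for r in range(ih - kh + 1):
        row = []
        for c in range(iw - kw + 1):
            # Multiply-accumulate over the kh x kw window: this inner loop
            # is the arithmetic that dominates a CNN's compute budget.
            acc = 0.0
            for i in range(kh):
                for j in range(kw):
                    acc += image[r + i][c + j] * kernel[i][j]
            row.append(acc)
        out.append(row)
    return out


# A 4x4 image with a vertical edge between columns 1 and 2.
image = [
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
]
# Horizontal-gradient kernel: responds where brightness rises left to right.
kernel = [
    [-1, 1],
    [-1, 1],
]
print(conv2d_valid(image, kernel))
# → [[0.0, 2.0, 0.0], [0.0, 2.0, 0.0], [0.0, 2.0, 0.0]]
```

The nonzero column marks where the edge sits. A real network repeats this kind of sliding-window arithmetic millions of times per image, which is why moving it off the digital logic matters.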

Here’s how it works:

- Data is converted into laser light.
- The light passes through the Fresnel lenses, which carry out the convolution optically.
- The result is converted back into a digital signal for the neural network.
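The article does not spell out the lens layout. The usual textbook explanation of lens-based convolution, which we assume here, is the convolution theorem: a lens forms the Fourier transform of the light field at its focal plane, so transforming the input, multiplying by the kernel’s spectrum, and transforming back yields the convolution. The sketch below walks through the three steps under that assumption, using 1D signals and a naive DFT for brevity. `encode_to_light`, `optical_convolution`, and `detect_to_digital` are hypothetical names. Real photodetectors measure intensity rather than signed amplitude, so reading out the real part is a deliberate simplification.

```python
import cmath


def dft(x, inverse=False):
    """Naive discrete Fourier transform; in this simplified model it stands
    in for what a lens does to the light field in a single pass."""
    n = len(x)
    sign = 1 if inverse else -1
    out = []
    for k in range(n):
        s = sum(x[t] * cmath.exp(sign * 2j * cmath.pi * k * t / n) for t in range(n))
        out.append(s / n if inverse else s)
    return out


def encode_to_light(data):
    # Step 1 (hypothetical modulator): each value becomes a field amplitude.
    return [complex(v) for v in data]


def optical_convolution(field, kernel):
    # Step 2: transform, multiply by the kernel's spectrum (a fixed "mask"),
    # transform back. This yields the circular convolution of field and kernel.
    padded = kernel + [0] * (len(field) - len(kernel))
    spectrum = [a * b for a, b in zip(dft(field), dft(padded))]
    return dft(spectrum, inverse=True)


def detect_to_digital(field):
    # Step 3 (hypothetical detector/ADC): keep the real part, rounded.
    return [round(v.real, 6) for v in field]


def circular_convolution(x, k):
    # Direct digital reference: y[i] = sum_j x[j] * k[(i - j) mod n]
    n = len(x)
    k = k + [0] * (n - len(k))
    return [sum(x[j] * k[(i - j) % n] for j in range(n)) for i in range(n)]


data = [1.0, 2.0, 3.0, 4.0, 0.0, 0.0]
kernel = [1.0, -1.0]  # first-difference filter
optical = detect_to_digital(optical_convolution(encode_to_light(data), kernel))
print(optical)
print(circular_convolution(data, kernel))  # → [1.0, 1.0, 1.0, 1.0, -4.0, 0.0]
```

Both paths give the same numbers (up to floating-point rounding). The difference is where the work happens: digitally, the direct version costs on the order of n² multiply-accumulates, while in the optical model the transforms are performed by light propagating through the lenses.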

By shifting the convolution into the optical domain, the lenses perform it at near-zero energy and much faster than conventional electronic approaches. Energy is still needed to drive the laser and to convert data into and out of light.

Tested and Proven

In proof-of-concept tests, the chip achieved about 98% accuracy in classifying handwritten digits, essentially matching conventional electronic hardware while consuming a fraction of the power. The team also demonstrated wavelength multiplexing, meaning multiple streams of data could be processed at once using different colors of laser light. That built-in parallelism is a distinct advantage of photonics.
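Wavelength multiplexing can be pictured as a batch dimension that costs no extra passes. The toy model below assumes that each wavelength channel carries its own data stream through the same lens at the same time and that a demultiplexer separates the outputs, so one physical pass yields one convolution per channel. The channel plan (1550 to 1552 nm) and the names `conv1d_valid` and `multiplexed_pass` are hypothetical. The model also ignores the fact that diffractive elements such as Fresnel lenses behave differently at different wavelengths, which a real design must compensate for.

```python
def conv1d_valid(signal, kernel):
    # Sliding-window weighted sum, stride 1, no padding (kernel not flipped).
    n, k = len(signal), len(kernel)
    return [sum(signal[i + j] * kernel[j] for j in range(k)) for i in range(n - k + 1)]


def multiplexed_pass(channels, kernel):
    """One optical 'pass' in this toy model: every wavelength channel goes
    through the same lens simultaneously and is demultiplexed afterward,
    modeled here as independent per-channel convolutions."""
    return {wl: conv1d_valid(data, kernel) for wl, data in channels.items()}


# Hypothetical channel plan: three data streams on three wavelengths (nm).
channels = {
    1550: [1, 2, 3, 4],
    1551: [0, 1, 0, 1],
    1552: [5, 5, 5, 5],
}
kernel = [1, -1]
results = multiplexed_pass(channels, kernel)
for wl, out in results.items():
    print(wl, out)
# → 1550 [-1, -1, -1]
# → 1551 [-1, 1, -1]
# → 1552 [0, 0, 0]
```

On a digital processor the dictionary comprehension runs the channels one after another (or across parallel cores); in the photonic model they share a single trip through the lens, so adding a wavelength adds throughput without adding another pass.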

Why It Matters for Engineers

- Efficiency Gains: Potential for AI systems to become 100x more energy efficient.
- Integration Potential: Built with standard semiconductor manufacturing processes, which eases adoption.
- Scalability: Optical operations sidestep the resistive heating that limits electronic circuits.
- Parallelism: Multiple wavelengths can carry separate data streams through the chip simultaneously.

“This is the first time anyone has put this type of optical computation on a chip and applied it to an AI neural network,” said Hangbo Yang, a research associate professor at UF.

Study leader Volker J. Sorger added, “Performing a key machine learning computation at near zero energy is a leap forward for future AI systems. This is critical to keep scaling up AI capabilities in years to come.”

Looking Ahead

The work was carried out with collaborators at the Florida Semiconductor Institute, UCLA, and George Washington University. With chipmakers like NVIDIA already experimenting with optical components in their AI hardware, researchers see a direct path to commercial integration.

“In the near future, chip-based optics will become a key part of every AI chip we use daily,” Sorger noted. “And optical AI computing is next.”