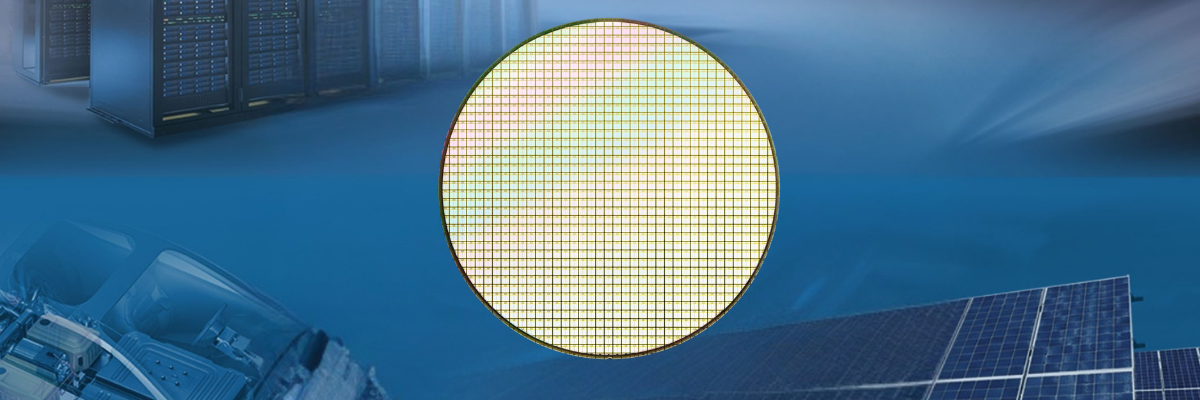

The rapid expansion of artificial intelligence workloads is placing unprecedented pressure on traditional data center infrastructure. Increasing compute demand, grid limitations, cooling requirements, and long construction timelines are forcing the industry to explore alternative deployment models for AI computing resources.

One emerging concept is distributed residential compute infrastructure.

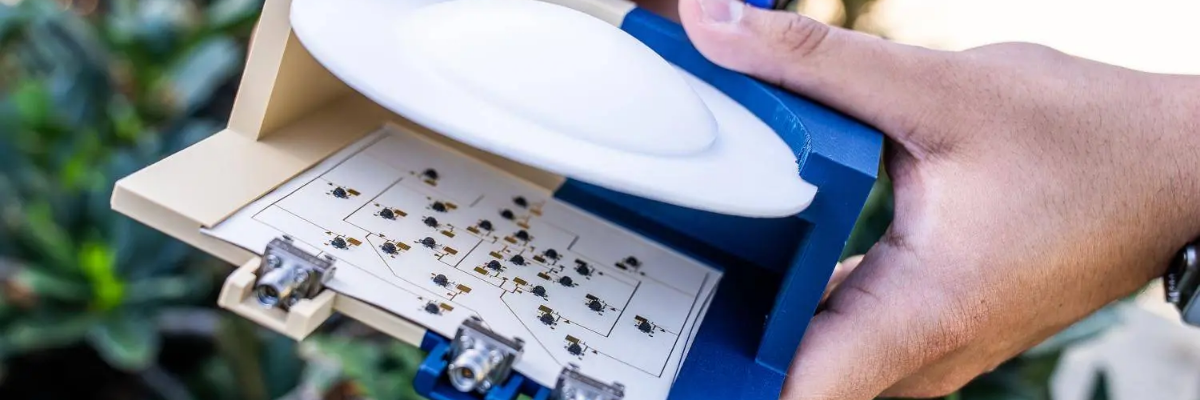

Smart electrical panel company Span, working with NVIDIA and homebuilder PulteGroup, is reportedly developing and testing a system called XFRA that places compact AI compute nodes near homes. Rather than relying exclusively on centralized hyperscale facilities, the approach distributes smaller compute systems throughout residential developments.

The concept brings together smart energy systems, residential electrical infrastructure, distributed computing, and AI acceleration technologies.

The Shift Toward Distributed AI Infrastructure

Traditional AI data centers require:

- High-density power delivery

- Advanced thermal management

- Significant land and construction investments

- Grid interconnection approvals

- Long deployment timelines

As AI adoption accelerates, utilities and infrastructure providers are finding it increasingly difficult to bring centralized compute facilities online fast enough to meet demand.

Distributed compute architectures attempt to address some of these challenges by deploying smaller compute nodes across wider geographic regions.

In the case of the Span XFRA platform, residential communities may serve as deployment locations for localized AI compute systems integrated with modern home electrical infrastructure.

Why Residential Infrastructure Matters

Modern residential electrical systems are becoming substantially more sophisticated than those installed only a decade ago.

Newer homes increasingly incorporate:

- Smart electrical panels

- Solar generation systems

- Residential battery storage

- EV charging infrastructure

- Intelligent load balancing

- Connected energy management systems

This evolution creates an environment where residential properties can potentially support more advanced grid-interactive applications.

Span’s background in smart panel technology makes the company a natural fit for this space. Its existing products already provide:

- Real-time energy monitoring

- Dynamic load management

- Circuit-level control

- Energy prioritization

- Grid-aware automation

In that context, adding distributed compute infrastructure is an extension of the broader intelligent energy ecosystem.

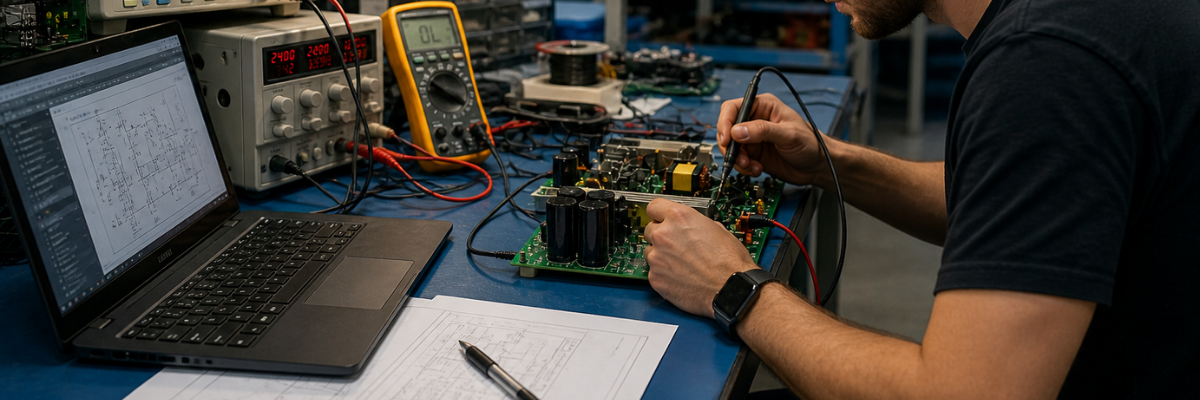

NVIDIA’s Role in Distributed Compute

The involvement of NVIDIA is particularly significant given the company’s dominant role in AI acceleration hardware.

Modern AI systems rely heavily on GPU-based compute architectures for:

- Neural network training

- Inference acceleration

- Computer vision

- Generative AI

- Simulation workloads

- Edge AI processing

Distributed AI infrastructure could allow NVIDIA-based compute systems to operate closer to localized energy resources and potentially reduce pressure on centralized facilities.

This approach may also align with broader industry trends toward:

- Edge computing

- Distributed inference

- Low-latency AI deployment

- Grid-aware compute scaling

- Decentralized infrastructure architectures

Engineering Challenges

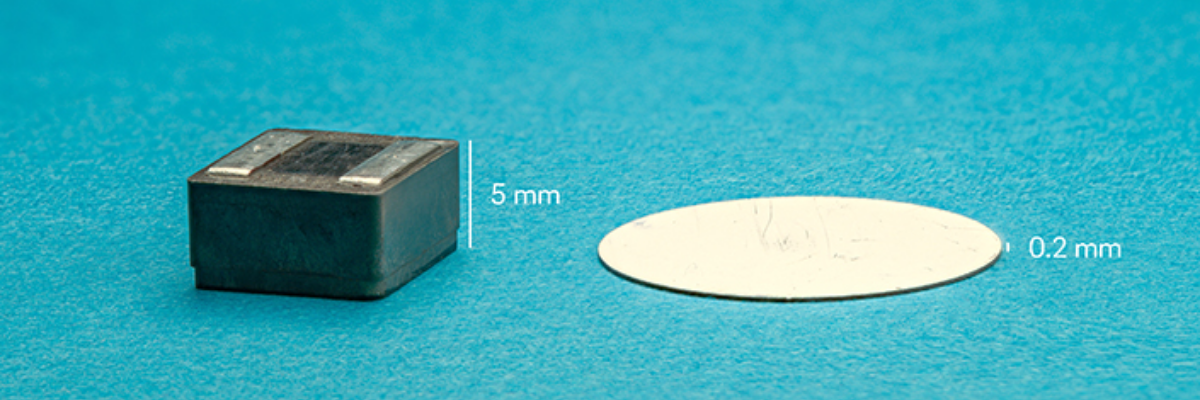

Although the concept is compelling, deploying AI compute systems in residential environments introduces several significant engineering challenges.

Thermal Management

AI accelerators generate substantial heat loads.

Unlike industrial data centers, residential-adjacent systems must address:

- Noise limitations

- Environmental exposure

- Compact form factors

- Passive or low-noise cooling requirements

- Seasonal operating conditions

Thermal efficiency becomes particularly important when integrating compute hardware into suburban or residential settings.
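
To make the seasonal constraint concrete, the sketch below applies the standard sensible-heat relation (airflow = heat / (air density × specific heat × temperature rise)) to estimate how much air a node would need to move. The 5 kW node power and the allowable temperature rises are hypothetical values chosen for illustration, not XFRA specifications, and the air properties are approximations.

```python
AIR_DENSITY_KG_M3 = 1.2            # approximate, near sea level at about 20 °C
AIR_SPECIFIC_HEAT_J_KG_K = 1005.0  # approximate value for dry air


def required_airflow_m3_s(heat_w: float, delta_t_k: float) -> float:
    """Airflow needed to remove heat_w watts with an air temperature rise of delta_t_k."""
    if delta_t_k <= 0:
        raise ValueError("delta_t_k must be positive")
    return heat_w / (AIR_DENSITY_KG_M3 * AIR_SPECIFIC_HEAT_J_KG_K * delta_t_k)


# Hypothetical node: essentially all electrical power ends up as heat.
node_power_w = 5000.0

# Assumed allowable air temperature rise: larger in cold weather, smaller in hot.
for season, delta_t_k in (("winter", 25.0), ("summer", 10.0)):
    flow = required_airflow_m3_s(node_power_w, delta_t_k)
    print(f"{season}: {flow:.2f} m^3/s")
```

Under these assumptions the summer case needs 2.5 times the winter airflow, which is where the cooling requirement starts to conflict with residential noise limits.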

Power Distribution and Grid Interaction

Distributed compute systems may create dynamic load conditions at the neighborhood level.

This introduces considerations involving:

- Peak demand balancing

- Transformer loading

- Power quality

- Demand response coordination

- Utility integration

- Backup power management

Smart electrical infrastructure may be essential for dynamically managing compute loads relative to residential energy usage.
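
As a rough illustration of what managing compute loads relative to residential usage could look like, the following sketch caps a compute node's draw at whatever headroom remains on a shared neighborhood transformer. Every name and number here (`TransformerState`, `compute_budget_kw`, the 50 kW rating, the 25% reserve) is hypothetical; it is not Span's control logic.

```python
from dataclasses import dataclass


@dataclass
class TransformerState:
    """Snapshot of one neighborhood transformer (all values hypothetical)."""
    rating_kw: float                # nameplate capacity, treated as kW for simplicity
    household_load_kw: float        # current aggregate residential demand
    reserve_fraction: float = 0.25  # share of the rating held back as a safety margin


def compute_budget_kw(state: TransformerState, requested_kw: float) -> float:
    """Return how much power the compute node may draw right now.

    Household load takes priority; compute receives only what is left
    after the load and the reserve are subtracted from the rating.
    """
    usable_kw = state.rating_kw * (1.0 - state.reserve_fraction)
    headroom_kw = max(0.0, usable_kw - state.household_load_kw)
    return min(requested_kw, headroom_kw)


evening_peak = TransformerState(rating_kw=50.0, household_load_kw=30.0)
overnight = TransformerState(rating_kw=50.0, household_load_kw=12.0)

print(compute_budget_kw(evening_peak, requested_kw=10.0))  # 7.5
print(compute_budget_kw(overnight, requested_kw=10.0))     # 10.0
```

The design choice worth noting is the priority order: the compute node is throttled during the evening peak rather than the homes, and it returns to full power once residential demand falls.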

Reliability and Serviceability

Traditional data centers rely on controlled operating environments and centralized maintenance access.

Distributed residential deployments require:

- Simplified maintenance procedures

- Remote diagnostics

- Fault isolation

- High reliability

- Environmental hardening

Service models for residential compute infrastructure remain an open engineering and operational question.

Potential Benefits of Distributed Residential Compute

If successful, distributed AI infrastructure could offer several advantages.

Faster Infrastructure Deployment

Large hyperscale facilities can require years of planning, permitting, and construction.

Smaller distributed deployments may enable more incremental scaling.

Improved Grid Utilization

Distributed systems may allow better coordination with:

- Residential solar generation

- Battery storage systems

- Off-peak energy availability

- Demand response programs
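
One simple way to picture this coordination is a scheduler that places deferrable compute work in the hours with the largest solar surplus. The sketch below is a minimal greedy example under assumed hourly forecasts; the function name `plan_compute_hours` and all forecast values are hypothetical.

```python
def plan_compute_hours(solar_kw, household_kw, hours_needed):
    """Pick up to hours_needed hours with the largest positive solar surplus."""
    surplus = [s - h for s, h in zip(solar_kw, household_kw)]
    # Rank hour indices from largest to smallest surplus.
    ranked = sorted(range(len(surplus)), key=lambda hour: surplus[hour], reverse=True)
    # Keep only hours where generation actually exceeds household demand.
    chosen = [hour for hour in ranked[:hours_needed] if surplus[hour] > 0]
    return sorted(chosen)


# Hypothetical forecasts (kW) for ten consecutive hours.
solar_forecast = [0, 0, 1, 3, 5, 6, 5, 3, 1, 0]
household_forecast = [1, 1, 1, 1, 2, 2, 2, 2, 3, 3]

print(plan_compute_hours(solar_forecast, household_forecast, hours_needed=3))  # [4, 5, 6]
```

A real system would also have to account for battery state of charge, tariffs, and demand response signals, which this sketch ignores.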

Reduced Transmission Constraints

Locating compute resources closer to distributed energy assets could reduce transmission bottlenecks associated with centralized facilities.

Future Smart Neighborhood Integration

The long-term vision may extend beyond compute hosting into broader intelligent infrastructure ecosystems integrating:

- Energy management

- EV charging

- Battery systems

- AI workloads

- Smart appliances

- Grid services

The Broader Industry Trend

The Span XFRA initiative reflects a broader industry movement toward convergence between:

- Energy infrastructure

- Smart homes

- AI compute

- Edge processing

- Distributed systems engineering

As AI workloads continue expanding, traditional infrastructure models may no longer scale efficiently on their own.

Distributed residential compute remains an early-stage concept, but it highlights how future neighborhoods may evolve into active participants within larger intelligent infrastructure networks.

Rather than functioning solely as energy consumers, homes may increasingly become nodes within distributed computational and energy ecosystems.

The collaboration between Span, NVIDIA, and PulteGroup demonstrates how AI infrastructure development is beginning to extend beyond conventional data center architectures.

While substantial engineering, regulatory, and operational challenges remain, the concept of residentially integrated distributed compute illustrates how rapidly the relationship between computing and energy infrastructure is evolving.

As smart electrical systems, distributed energy resources, and AI acceleration technologies continue advancing, residential infrastructure may become an increasingly important component of next-generation compute deployment strategies.