In the 1950s the most common job for American women was secretary; well into the next century, that still has not changed. And while women are trying to break through the ‘glass ceiling’, perhaps it’s made of Gorilla Glass.

As voice recognition software has advanced, virtual assistants have become embedded in consumer technology.

Though these assistants are created by different companies, there is a running trend: they are female, and they are sexualized. Is this because we find female assistance more helpful or approachable? Or do we all just want a Miss Moneypenny in our pocket? Arguably the issue is not so much that the virtual assistant is female as the response it has elicited from its human users. Deborah Harrison, an editorial writer in the Cortana division of Microsoft, told CNN that even female virtual assistants can’t escape the dirty and sometimes disrespectful minds of their human users. So much so that there are carefully prepared answers to the predictable questions that fall into this category.

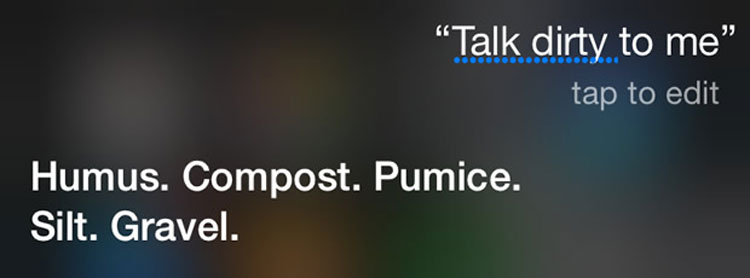

The very fact that these questions have to be answered, whether by engaging in the sexual banter or by suppressing it, raises the issue of how the assistant comes across. It cannot be too submissive or too dominant, though as Harrison said during a talk at the Re•Work Virtual Assistant Summit in San Francisco, “If you say things particularly a**holeish to Cortana she will get mad.”

Is there too much focus on protecting the creation of the virtual assistant? At the end of the day, isn’t it voice recognition software? Anthropomorphising any AI technology raises unavoidable ethical issues, but the sexist implications of the female virtual assistant add insult to injury when women already make up only 21% of the Core STEM (Science, Technology, Engineering and Mathematics) workforce.

Specific examples range from Svedka, the Swedish vodka brand, using a ‘fembot’ in its advertising, with claims of ‘stimulating V-spots’, to Gatebox’s virtual robot in a jar, which offers companionship alongside IoT smart home services. Dominik Bosnjak of Android Headlines described the latter as a “digital assistant which puts a tiny holographic girlfriend inside of what looks like an expensive coffeemaker.”

People may ask Apple’s Siri, Microsoft’s Cortana or Amazon’s Alexa shocking questions just to hear the witty prepared responses, but is the very fact that there are responses an affirmation, even an encouragement, of AI virtual assistants being sexualized? And does anyone else find this quite disturbing?