Deep convolutional neural networks (DCNNs) don’t observe objects as humans do. A York University collaborative study published in the Cell Press journal iScience claims that deep learning models fail to capture the configural nature of human shape perception. This can be dangerous in real-world scenarios.

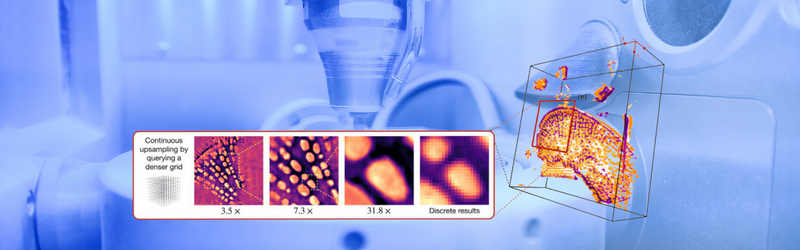

The study used novel visual stimuli called “Frankensteins” to explore how the human brain and DCNNs process holistic, configural object properties. Configural objects have been taken apart, put back together the wrong way, and have all the right local features in the wrong places. The human visual system is confused by Frankensteins, while DCNNs are not – revealing an insensitivity to configural object properties.

Findings are that the deep models tend to take shortcuts when solving complex recognition tasks, which may work in many cases, but can be dangerous in some real-world AI applications. Traffic video safety systems are an example. Vehicles, bicycles, and pedestrians obstruct each other and arrive at a driver’s eye as a jumble of disconnected fragments. The brain needs to correctly group those fragments to identify the correct categories and locations of the objects. An AI system can only perceive the pieces individually and will fail at this task, misunderstanding risks.

Modifications to training and architecture aimed at making networks more brain-like did not lead to configural processing. None of the networks could accurately predict trial-by-trial human object judgments.