In a paper published today in the journal Science Advances, University of Glasgow researchers describe a new method for creating video using single-pixel cameras. They have found a way to instruct a camera to prioritize objects within an image, in much the same way that brains make the same decisions.

The eyes and brains of humans, and many animals, work in tandem to prioritize specific areas of their field of view. During a conversation, for example, visual attention is focused primarily on the other speaker, with less of the brain’s ‘processing time’ given over to peripheral details. The vision of some hunting animals also works along similar lines.

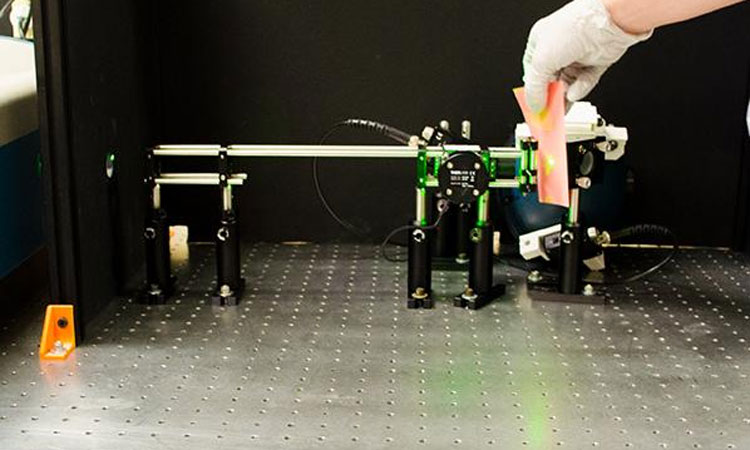

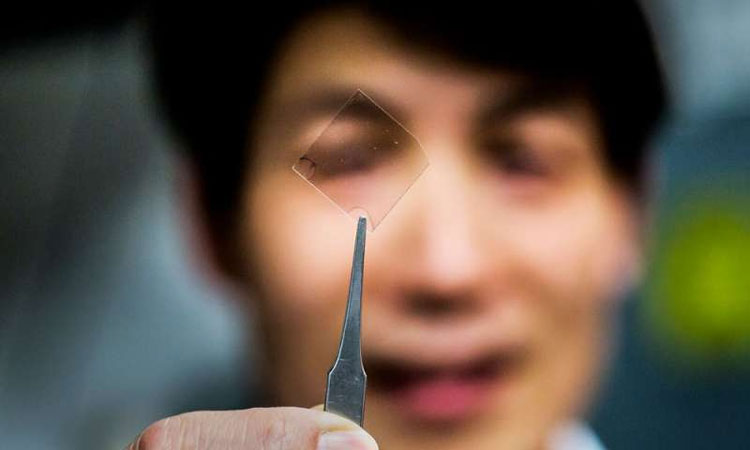

The team’s sensor uses just one light-sensitive pixel to build up moving images of objects placed in front of it. Single-pixel sensors are much cheaper than the dedicated megapixel sensors found in digital cameras, and are capable of building images at wavelengths where conventional cameras are expensive or simply don’t exist, such as infrared or terahertz frequencies.

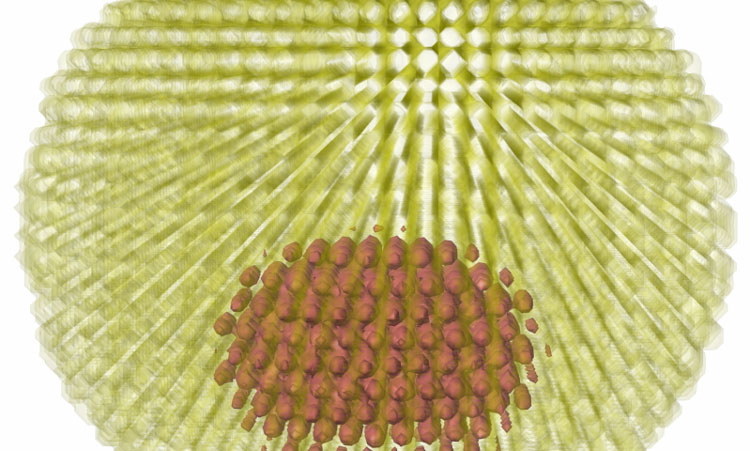

The images the system outputs are square, with an overall resolution of 1,000 pixels. In conventional cameras, those thousand pixels would be evenly spread in a grid across the image. The team’s new system can instead choose to allocate its ‘pixel budget’ to prioritize the most important areas within the frame, concentrating smaller, higher-resolution pixels in these locations and so sharpening the detail of some sections while sacrificing detail in others. This pixel distribution can be changed from one frame to the next, similar to the way biological vision systems work, for example when human gaze is redirected from one person to another.
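The idea of a fixed ‘pixel budget’ that is redistributed from frame to frame can be illustrated with a short Python sketch. It is not the team’s code or method: the function name `foveated_cells`, the 64-unit frame, the block sizes and the square region of interest are all assumptions chosen purely for illustration. The sketch tiles a square frame with large cells, subdivides only the cells in a chosen region of interest, and shows that moving that region leaves the total number of cells unchanged.

```python
def foveated_cells(size, coarse, fine, fovea_block, fovea_span=2):
    """Tile a size x size frame with square cells (illustrative only).

    The frame is covered by coarse blocks of side `coarse`. Blocks inside
    the region of interest (a fovea_span x fovea_span group of blocks whose
    top-left block index is `fovea_block`) are split into cells of side
    `fine`. Returns a list of (x, y, side) tuples, one per cell.
    """
    if size % coarse or coarse % fine:
        raise ValueError("size must be a multiple of coarse, and coarse of fine")
    blocks = size // coarse
    fx, fy = fovea_block
    if not (0 <= fx <= blocks - fovea_span and 0 <= fy <= blocks - fovea_span):
        raise ValueError("region of interest must lie inside the frame")

    cells = []
    for by in range(blocks):
        for bx in range(blocks):
            x0, y0 = bx * coarse, by * coarse
            in_fovea = (fx <= bx < fx + fovea_span) and (fy <= by < fy + fovea_span)
            if in_fovea:
                # Spend more of the budget here: many small cells.
                for dy in range(0, coarse, fine):
                    for dx in range(0, coarse, fine):
                        cells.append((x0 + dx, y0 + dy, fine))
            else:
                # Spend less here: one large cell for the whole block.
                cells.append((x0, y0, coarse))
    return cells


# Two frames with the region of interest in different places
# (hypothetical values, not taken from the paper).
frame_a = foveated_cells(size=64, coarse=8, fine=2, fovea_block=(1, 1))
frame_b = foveated_cells(size=64, coarse=8, fine=2, fovea_block=(5, 3))

# The budget (number of cells) is the same in both frames.
print(len(frame_a), len(frame_b))  # prints "124 124"
```

In this toy layout each frame uses 124 cells: 60 large cells plus 64 small ones. A uniform grid with a comparable number of cells would have to use the same cell size everywhere, which is the trade-off the pixel budget is meant to avoid.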

Dr David Phillips, Royal Academy of Engineering Research Fellow at the University of Glasgow’s School of Physics and Astronomy, led the research.

Dr Phillips said: “Initially, the problem I was trying to solve was how to maximize the frame rate of the single-pixel system to make the video output as smooth as possible.

“However, I started to think a bit about how vision works in living things and I realized that building a program which could interpret the data from our single-pixel sensor along similar lines could solve the problem. By channeling our pixel budget into areas where high resolutions were beneficial, such as where an object is moving, we could instruct the system to pay less attention to the other areas of the frame.

“By prioritizing the information from the sensor in this way, we’ve managed to produce images at an improved frame rate but we’ve also taught the system a valuable new skill.

“We’re keen to continue improving the system and explore the opportunities for industrial and commercial use, for example in medical imaging.”

The research is the latest in a string of single-pixel-imaging breakthroughs from the University’s Optics Group, led by Professor Miles Padgett, which include creating 3D images, imaging gas leaks, and ‘seeing’ through opaque surfaces.