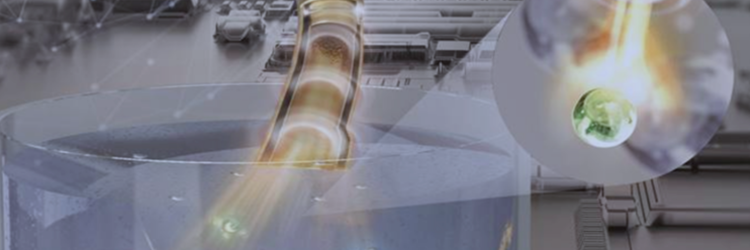

Researchers at the Institute of Chemical Reaction Design and Discovery (WPI-ICReDD), Hokkaido University, led by Professor Yasuhide Inokuma, have developed a machine-learning model that can distinguish the composition ratio of solid mixtures of chemical compounds using only photographs of the samples.

The model was designed and developed using mixtures of sugar and salt as a test case. The team combined random cropping, flipping, and rotation of the original photographs to generate a much larger number of sub-images for training and testing. This allowed the model to be developed using only 300 original photos for training. The trained model was roughly twice as accurate as the naked eye of even the most expert member of the team.
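The augmentation step described above can be illustrated with a minimal sketch. This is not the team's code: the function name `augment`, the crop size, the number of crops, and the restriction to 90-degree rotations are all assumptions made for illustration, since the article does not give those details.

```python
import numpy as np


def augment(photo, crop_size, n_crops, seed=0):
    """Return n_crops randomly cropped, flipped, and rotated sub-images.

    Hypothetical helper: crop size, crop count, and the use of 90-degree
    rotations are illustrative assumptions, not values from the study.
    """
    rng = np.random.default_rng(seed)
    height, width = photo.shape[:2]
    sub_images = []
    for _ in range(n_crops):
        # Random crop: pick a top-left corner so the square fits in the photo.
        top = int(rng.integers(0, height - crop_size + 1))
        left = int(rng.integers(0, width - crop_size + 1))
        crop = photo[top:top + crop_size, left:left + crop_size]
        # Random flips: each applied with probability 0.5.
        if rng.random() < 0.5:
            crop = np.fliplr(crop)
        if rng.random() < 0.5:
            crop = np.flipud(crop)
        # Random rotation by 0, 90, 180, or 270 degrees.
        crop = np.rot90(crop, k=int(rng.integers(0, 4)))
        sub_images.append(crop.copy())
    return np.stack(sub_images)


# Stand-in for one photograph (height x width x RGB); real photos would be loaded from disk.
photo = np.random.default_rng(1).integers(0, 256, size=(100, 120, 3), dtype=np.uint8)
batch = augment(photo, crop_size=32, n_crops=10)
```

Here a single photo yields ten 32 x 32 sub-images. Applying the same idea across a few hundred photos is how a small set of originals can supply many training examples.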

After the successful test case, the researchers applied the model to other chemical mixtures. The model successfully distinguished different polymorphs and enantiomers, both of which are highly similar versions of the same molecule with subtle differences in atomic or molecular arrangement. Determining these subtle differences is essential in the pharmaceutical industry and usually requires a more time-consuming process.

The model even handled more complex mixtures, accurately assessing the percentage of a target molecule in a four-component mixture. It was also used to analyze reaction yield, tracking the progress of a thermal decarboxylation reaction.

The team further demonstrated the versatility of their model, showing that it could accurately analyze images taken with a mobile phone after supplemental training. The researchers anticipate various applications in research labs and in industry.

“We see this as applicable when constant, rapid evaluation is required, such as monitoring reactions at a chemical plant or as an analysis step in an automated process using a synthesis robot,” explained Specially Appointed Assistant Professor Yuki Ide. “Additionally, this could act as an observation tool for impaired vision.”