Could sensing ambient magnetic fields change how we see the world? How would we speak if we could see the physiological effects our words had on others? Would abstract art based on human brain scans shift our understanding of creativity and imagination? Questions like these may sound like something out of the 1960s.

Four students at the Harvard John A. Paulson School of Engineering and Applied Sciences (SEAS) and the MIT Media Lab are tackling these questions. Aida Baradari, Alice Cai, Dünya Baradari, and Treyden Chiaravalloti co-founded and run the Augmentation Lab, a student-led organization that uses technology to explore and expand human experience, interaction, and creative expression. The team organized a 10-week residency in San Francisco where eight undergraduate and graduate students and young professionals built projects at the intersection of humanism and machines.

“We had a vision for how technology could be developed differently and how we could improve our relationship to technology,” said Alice Cai, a third-year student pursuing a special concentration in Human Augmentation. “We felt that going into the future, humanity could use more thoughtful development and augmentation technologies that truthfully improve your life. We approached this residency model with the idea of self-experimentation, where you can implement the technologies you’ve built in your own life to experientially understand their effect.”

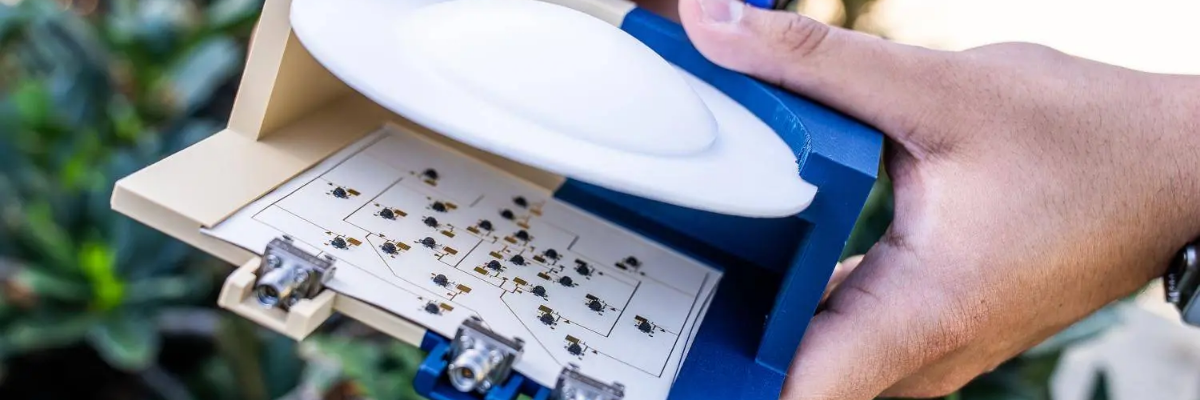

Residents often started on their projects as soon as they woke up and kept working throughout the day. Evenings typically ended with a communal dinner, where residents discussed existential questions they had written down and placed in a hat. The residents had access to 3D printers, oscilloscopes, soldering stations, extended reality equipment, a brain-computer interface headset, and more.

Their final projects combined hardware, software, and storytelling. The Haptic Ring is a sensory augmentation wearable that vibrates in the presence of magnetic fields. Enact is an artificial intelligence (AI) web application that guides users through roleplaying difficult conversations. Emothesia uses an augmented reality headset to display another person’s biological data, such as heart rate or temperature. The Imagination Engine records brainwaves with an EEG headset, then feeds the data to a generative AI program to create abstract art. GameChanger aims to help people change by prompting them to carry out Fluxus-inspired experiments in their daily lives. The Empathy Machine stimulates the wearer’s muscles based on another person’s muscle movements, mimicking a phenomenon called mirror-touch synesthesia.

The team began developing the residency last March. They’d already collaborated on several augmentation projects, including a robotic limb they presented at the spring SEAS Design Fair. Although the timeline was tight, they secured funding and selected technologists Inga Zhuravleva, Jennifer Huang, Aeden Cullen, and Ellery Buntel from nearly 200 applicants.

Many members of the Augmentation Lab’s leadership team are now at Harvard or MIT, including SEAS computer science students Caine Ardayfio and Aryan Naveenso. The lab hopes to keep growing throughout the school year. Its members plan to publish essays and op-eds about the future of technology, develop new technologies, and continue to foster interdisciplinary conversation among artists, technologists, policymakers, and others.