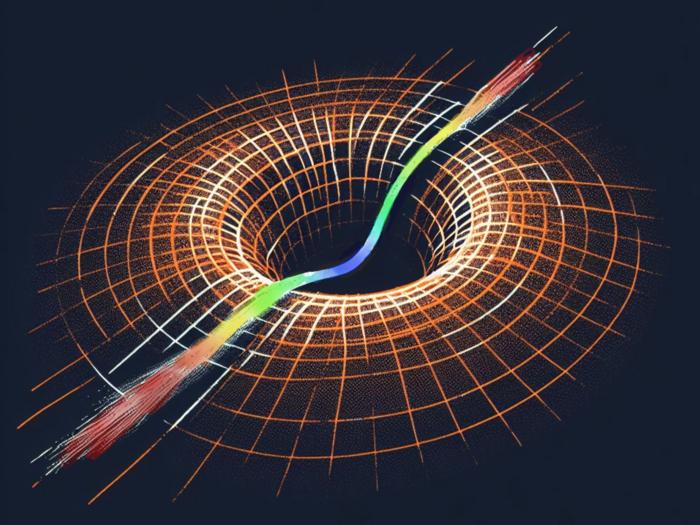

A novel deep-learning approach simplifies the creation of holograms by generating 3D images directly from 2D photos taken with a standard camera. The method streamlines hologram generation with a sequence of three deep neural networks, outperforming today’s high-end graphics processing units in speed. Expensive equipment such as RGB-D cameras is no longer needed after the training phase, which makes the method less costly. Applications include high-fidelity 3D displays and in-vehicle holographic systems.

A team of researchers led by Professor Tomoyoshi Shimobaba of the Graduate School of Engineering, Chiba University, published the results recently in the journal Optics and Lasers in Engineering.

The approach uses three deep neural networks (DNNs) to transform a regular 2D color image into data that can be used to display a 3D scene or object as a hologram. The first DNN takes a color image captured with a regular camera as input and predicts the associated depth map, which provides information about the 3D structure of the picture. A second DNN uses the original image and the depth map to generate a hologram. A third DNN refines the hologram generated by the second DNN, making it suitable for display.
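The data flow between the three networks can be illustrated with a minimal Python sketch. It is not the researchers' implementation: the three `placeholder_*` functions are hypothetical stand-ins with made-up arithmetic where trained DNNs would go, and the image is assumed to be a small grayscale grid rather than a color photo. Only the wiring in `run_pipeline` (image to depth map, image plus depth map to hologram, hologram to refined hologram) follows the description above.

```python
import math
from typing import Callable, List

Grid = List[List[float]]  # one float per pixel (grayscale stand-in for a color image)


def placeholder_depth_net(image: Grid) -> Grid:
    """Stand-in for DNN 1: image -> depth map (toy rule, not a real predictor)."""
    return [[1.0 - pixel for pixel in row] for row in image]


def placeholder_hologram_net(image: Grid, depth: Grid) -> Grid:
    """Stand-in for DNN 2: (image, depth map) -> raw hologram phase values."""
    return [
        [2.0 * math.pi * (pixel + 3.0 * d) for pixel, d in zip(img_row, depth_row)]
        for img_row, depth_row in zip(image, depth)
    ]


def placeholder_refine_net(hologram: Grid) -> Grid:
    """Stand-in for DNN 3: raw hologram -> display-ready hologram.

    Here "refinement" is just wrapping each phase into [0, 2*pi).
    """
    return [[value % (2.0 * math.pi) for value in row] for row in hologram]


def run_pipeline(
    image: Grid,
    depth_net: Callable[[Grid], Grid],
    hologram_net: Callable[[Grid, Grid], Grid],
    refine_net: Callable[[Grid], Grid],
) -> Grid:
    """Chain the three stages in the order described in the article."""
    depth = depth_net(image)                 # stage 1: predict depth from the photo
    raw_hologram = hologram_net(image, depth)  # stage 2: needs BOTH image and depth
    return refine_net(raw_hologram)          # stage 3: refine for display


if __name__ == "__main__":
    photo = [[0.0, 0.5], [1.0, 0.25]]  # toy 2x2 "photo", values in [0, 1]
    hologram = run_pipeline(
        photo, placeholder_depth_net, placeholder_hologram_net, placeholder_refine_net
    )
    for row in hologram:
        print(row)
```

Passing the stages in as functions keeps the sketch independent of any particular framework. Note that only a 2D image enters the pipeline at inference time, which is consistent with the article's point that depth-sensing hardware is needed only for training.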

In the future, this approach may find applications in head-up and head-mounted displays. It could also revolutionize in-vehicle holographic head-up displays, which present necessary information on people, roads, and signs to passengers in 3D.