Humans perceive reality through touch. Haptic devices, which produce highly specific vibrations that mimic touch sensations, are a way to bring that sense to life. The challenge is that humans are incredibly particular about something feeling “right,” and virtual textures don’t always hit the mark.

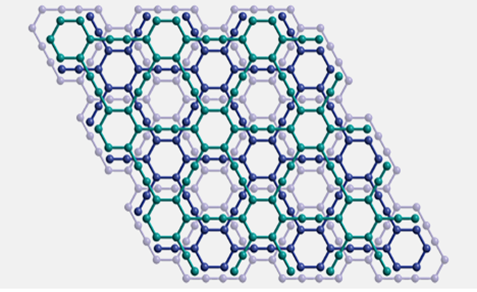

Researchers at the USC Viterbi School of Engineering developed a new method, called a preference-driven model, for computers to achieve that authentic texture. The framework uses people's ability to distinguish between the fine details of textures as a tool to give the virtual counterparts a tune-up.

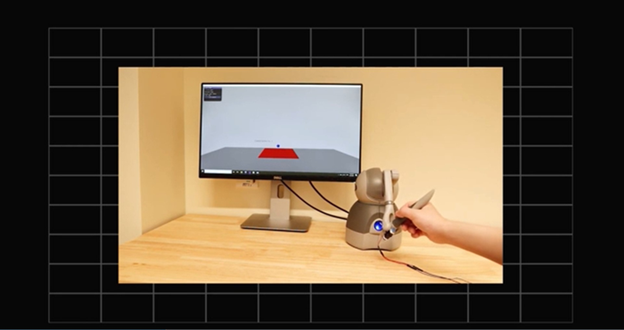

The model, published in IEEE Transactions on Haptics by three USC Viterbi Ph.D. students, iteratively updates a virtual texture so that it ends up matching the real one. Researchers first give the user an actual texture to feel. The model then randomly generates three virtual textures using dozens of variables, and the user picks the one that feels most similar to the real thing. Over successive rounds, the search gets closer and closer to what the user prefers.

The user only has to choose which texture matches best and adjust the amount of friction using a simple slider. Friction is essential to how we perceive textures, and its perception varies from person to person.
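To make the pick-one-of-three loop concrete, here is a minimal Python sketch. It is not the researchers' implementation: the parameter count, the shrinking search radius, the choice to keep the previous pick as one of the three candidates, and every function name are assumptions made for illustration. A simulated user stands in for a person, and the friction slider is left out.

```python
import math
import random


def preference_search(choose, dim=12, rounds=30, seed=0):
    """Toy preference-driven search over texture parameters (illustrative only).

    `choose` receives three candidate parameter vectors and returns the
    index (0, 1, or 2) of the one that feels closest to the real texture.
    Returns every pick in order, starting with the initial random texture.
    """
    rng = random.Random(seed)
    current = [rng.random() for _ in range(dim)]  # random starting texture
    history = [current]
    radius = 0.5  # assumed: how far new candidates may stray from the current pick

    for _ in range(rounds):
        # Assumption: keep the current pick and add two random variations of it.
        candidates = [current]
        for _ in range(2):
            candidates.append(
                [min(1.0, max(0.0, v + rng.uniform(-radius, radius))) for v in current]
            )
        current = candidates[choose(candidates)]
        history.append(current)
        radius *= 0.9  # narrow the search as the rounds go on
    return history


def make_simulated_user(target):
    """Hypothetical stand-in for a person: picks the candidate nearest a hidden target."""
    def choose(candidates):
        return min(range(len(candidates)),
                   key=lambda i: math.dist(candidates[i], target))
    return choose


if __name__ == "__main__":
    target = [0.3] * 12  # the "real" texture, unknown to the search itself
    history = preference_search(make_simulated_user(target))
    print(f"start distance: {math.dist(history[0], target):.3f}")
    print(f"final distance: {math.dist(history[-1], target):.3f}")
```

The search never sees the target directly. It only learns which of three options the user prefers, which mirrors the idea that a person can easily say which texture feels closest without describing why.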

Their work comes just in time for the emerging market for specific, accurate virtual textures. Everything from video games to fashion design integrates haptic technology, and this user preference method can improve the existing databases of virtual textures.

This especially comes in handy for virtual textures used in training for dentistry or surgery, which need to be highly accurate.