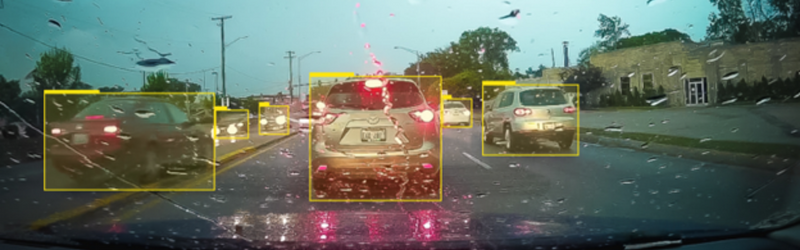

Safety and reliability are among the most significant concerns surrounding autonomous vehicles. The vehicles must accurately and efficiently monitor and interpret their surroundings, including potential threats to passenger safety.

Autonomous vehicles rely on sensors such as Light Detection and Ranging (LiDAR), radar, and RGB cameras, which produce large amounts of data. Quick and accurate processing and interpretation of this collected information is therefore critical.

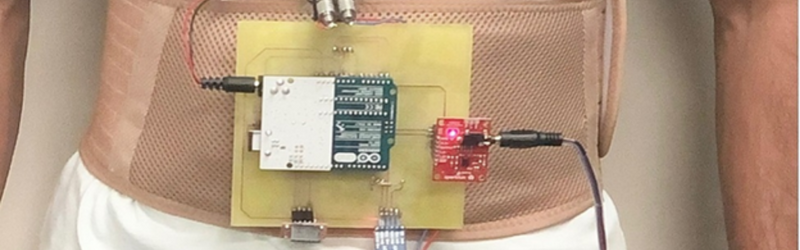

In a recent study published in the journal IEEE Transactions on Intelligent Transportation Systems, researchers from Incheon National University, Korea, developed a smart IoT-enabled end-to-end system for real-time 3D object detection based on deep learning.

The researchers fed the collected RGB images and point cloud data as input to YOLOv3, which in turn output classification labels and bounding boxes with confidence scores. They then tested its performance on the Lyft dataset. The results showed that YOLOv3 achieved a detection accuracy above 96% for both 2D and 3D objects, outperforming other state-of-the-art detection models.
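To make the detector's output more concrete, here is a minimal Python sketch of how labels, bounding boxes, and confidence scores are commonly post-processed in YOLO-style pipelines: low-confidence detections are dropped, then overlapping boxes of the same class are merged by non-maximum suppression. This is an illustration only, not the researchers' code. The `Detection` class, the `filter_detections` function, and the threshold values are assumptions made for the example, and it handles 2D boxes only.

```python
from dataclasses import dataclass


@dataclass
class Detection:
    """Hypothetical container for one detector output (not from the study)."""
    label: str
    confidence: float
    box: tuple  # (x_min, y_min, x_max, y_max) in pixels


def iou(a, b):
    """Intersection over union of two axis-aligned 2D boxes."""
    ix_min, iy_min = max(a[0], b[0]), max(a[1], b[1])
    ix_max, iy_max = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix_max - ix_min) * max(0.0, iy_max - iy_min)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def filter_detections(detections, min_confidence=0.5, max_iou=0.45):
    """Drop weak detections, then apply per-class non-maximum suppression.

    Both thresholds are illustrative defaults, not values from the study.
    """
    # Keep only confident detections, strongest first.
    candidates = sorted(
        (d for d in detections if d.confidence >= min_confidence),
        key=lambda d: d.confidence,
        reverse=True,
    )
    kept = []
    for det in candidates:
        # Keep a box unless it heavily overlaps a stronger box of the same class.
        if all(det.label != k.label or iou(det.box, k.box) <= max_iou for k in kept):
            kept.append(det)
    return kept
```

Given two heavily overlapping "car" boxes, the sketch keeps only the one with the higher confidence score, so each object on the road is reported once.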

The method can be applied to autonomous vehicles, autonomous parking, autonomous delivery, and future autonomous robots, as well as in applications where object and obstacle detection, tracking, and visual localization are required.