If a machine-learning model is biased, it may be impossible to remove this bias later. If, for example, an unbalanced dataset focuses on skin color, predictions will be both unfair and unreliable in the real world.

MIT researchers have found that machine-learning models popular for image recognition tasks can encode bias when trained on unbalanced data. When that happens—fixing it down the road is virtually impossible.

So, the researchers came up with a new fairness technique. When used, the model can produce fair outputs even when trained on unfair data. The team will present the research at the International Conference on Learning Representations.

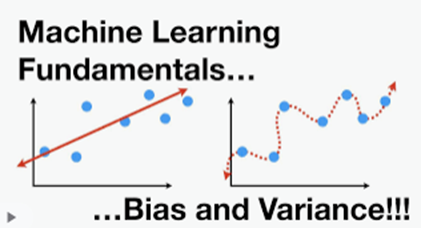

The technique is known as deep metric learning, whereby a neural network learns the similarity between objects by mapping similar photos close together and dissimilar photos far apart. The neural network maps images in an “embedding space” where a similarity metric between photos corresponds to their distance.

The researchers ran several experiments on models with unfair similarity metrics and could not overcome the bias the model had learned in its embedding space. The only way to solve this problem is to ensure the embedding space is fair to begin with.

Their solution, called Partial Attribute Decorrelation (PARADE), involves training the model to learn a separate similarity metric for a sensitive attribute and then decorrelate from the targeted similarity metric. Because the user can control the decorrelation between similarity metrics, this method is applicable in many situations.

Moving forward, researchers are interested in studying how to force a deep metric learning model to learn good features in the first place.