The optical navigation team at NASA’s Goddard Space Flight Center served as backup navigators for the OSIRIS-REx mission to the near-Earth asteroid Bennu. By double-checking the primary navigation team’s work, the team proved the viability of navigating by visual cues.

Optical navigation works by observing a target with cameras, lidar, or other sensors and identifying landmarks on its surface. The Goddard Image Analysis and Navigation Tool (GIANT) software analyzes the images to extract information such as the precise distance to the target and to build three-dimensional maps of potential landing zones and hazards. It can also calculate the target’s mass and determine its center, which is important when trying to enter an orbit.
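To make the idea of measuring distance from an image concrete, here is a minimal sketch of one textbook approach: estimating range from the apparent size of a roughly spherical body of known diameter. It is not GIANT’s method or API. The function name, the camera parameters, and the example values are all hypothetical, chosen only for illustration.

```python
import math


def range_from_apparent_diameter(diameter_m, apparent_diameter_px,
                                 focal_length_mm, pixel_pitch_um):
    """Estimate the distance to the center of a spherical body, in meters.

    Hypothetical helper for illustration only; assumes an ideal pinhole
    camera and a fully lit, spherical target of known diameter.
    """
    if apparent_diameter_px <= 0:
        raise ValueError("apparent diameter must be positive")

    # Angle subtended by one pixel, in radians (pixel size / focal length).
    pixel_angle_rad = (pixel_pitch_um * 1e-6) / (focal_length_mm * 1e-3)

    # Angle subtended by the whole body in the image.
    angular_diameter_rad = apparent_diameter_px * pixel_angle_rad

    # For a sphere of radius R seen from range d: sin(angle / 2) = R / d.
    return (diameter_m / 2.0) / math.sin(angular_diameter_rad / 2.0)


# Illustrative values only: a body about 490 m across that spans 100 pixels
# in a camera with a 100 mm focal length and 10 micrometer pixels.
estimate_m = range_from_apparent_diameter(490.0, 100.0, 100.0, 10.0)
print(f"Estimated range: {estimate_m / 1000.0:.1f} km")
```

Real optical navigation is considerably more involved, since it must account for irregular shapes, lighting, camera distortion, and landmark matching. Still, the same geometric relationship between apparent size and distance sits underneath it.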

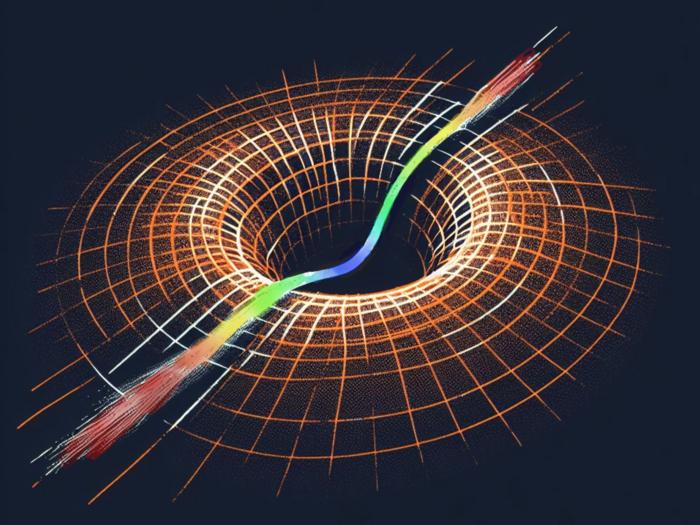

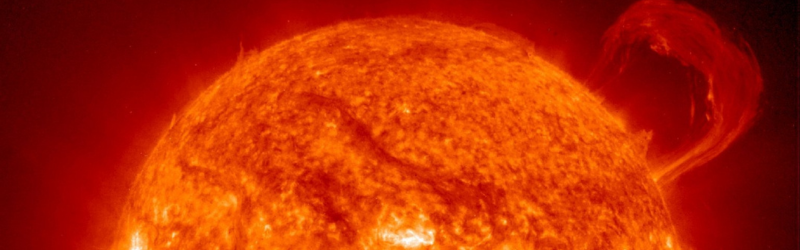

During OSIRIS-REx’s orbit, GIANT identified particles flung from the asteroid’s surface, and the team used images to calculate the particles’ movement and mass. The team has since expanded GIANT’s collection of software utilities and scripts. New developments include an open-source version of the software, released to the public, and celestial navigation for deep space travel based on observations of stars, the Sun, and solar system objects. Also in the works are a slimmed-down package to aid autonomous operations throughout a mission’s life cycle and an adaptation of the software to aid rovers and human explorers on the surface of the Moon or other planets.

Shortly after OSIRIS-REx left Bennu, Liounis’ team released a refined, open-source version for public use. An intern modified the code to use a graphics processor for ground-based operations, boosting the image processing behind GIANT’s navigation. A simplified version called cGIANT works with Goddard’s autonomous Navigation, Guidance, and Control software package to serve both small and large missions.

Traditional deep space navigation uses a mission’s radio signals, tracked through NASA’s Deep Space Network, to determine location, velocity, and distance from Earth. Optical navigation reduces reliance on the network, freeing up a valuable resource shared by many ongoing missions.