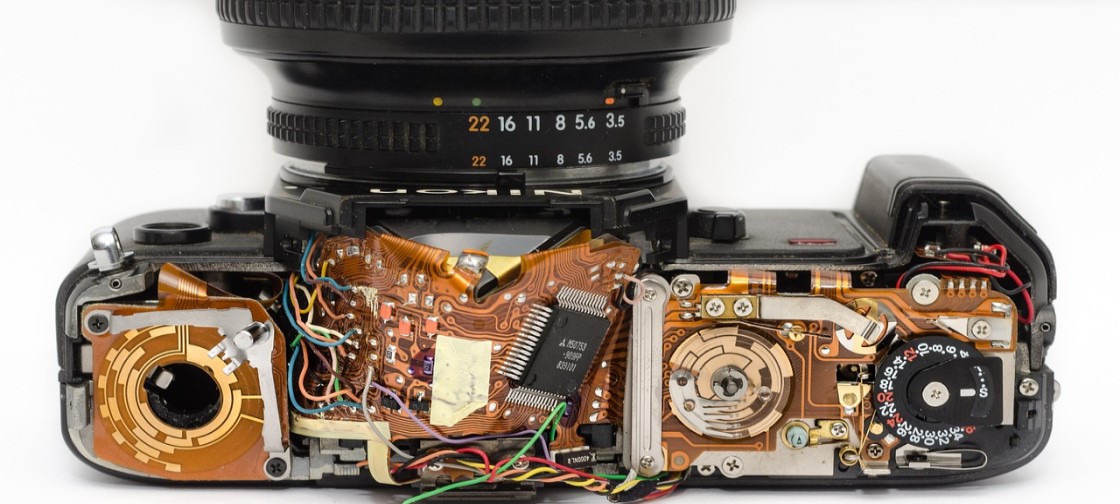

Last year, leading consumer electronics manufacturers and brands Foxconn, Nikon, Scenera, Sony Semiconductor Solutions and Wistron collaborated to create an ecosystem standard for smart camera markets, dubbing the group the “Network of Intelligent Camera Ecosystem (NICE) Alliance.”

The consortium was launched to provide an open data-sharing platform, based on video, images and AI, that enhances the synergy among multiple brands, cameras, services and apps. It has since grown, with Allion, Augentix, Mobilicom and TnM Tech joining as contributors.

After a year of collaboration and joint development, NICE Alliance released the first public review of specifications and detailed features on its blueprint and technology, with a goal to publish a finalized version for formal adoption by the second half of 2019.

Founded on a vision of advancing real-time video image analytics to bring the next era of smart cameras to the consumer and enterprise markets, the NICE Alliance aligns with leading manufacturers and suppliers, including sensor makers, camera module makers and cloud system solution providers, to drive adoption across the industry.

Currently, artificial intelligence (AI) processing has been confined to cloud-based data centers, which require heavy computing capacity and intensive training of deep learning models.

To address issues of security, data privacy, bandwidth, latency and cost, NICE has identified the need for decentralized architectures that allow AI processing to be performed on edge devices such as cameras and on advanced image sensors. This approach distributes AI capabilities across the cloud, cameras or IoT devices, and image sensors so that they can be shared in an efficient, formalized way.

With the massive increase of high-resolution camera products in the market, the amount of raw video data generated has become too large for practical streaming. Because the nature of video images is difficult to predict, and the huge volumes of video data carry only a small amount of relevant information, existing standard compression technology has yet to address this problem. NICE’s edge AI capabilities provide a tangible solution and increase efficiency by sorting images on the edge, ensuring that only relevant video information is sent to the cloud.
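
The idea of sorting images on the edge can be illustrated with a minimal sketch. It is not taken from the NICE Specification: the `Frame` type, the `relevance` score (assumed to come from some on-camera model) and the `threshold` value are all hypothetical names chosen for illustration.

```python
from dataclasses import dataclass
from typing import Iterable, Iterator


@dataclass
class Frame:
    """Hypothetical frame record; not a type defined by NICE."""
    frame_id: int
    relevance: float  # assumed score from an on-camera model, 0.0 to 1.0


def filter_on_edge(frames: Iterable[Frame], threshold: float = 0.5) -> Iterator[Frame]:
    """Yield only the frames whose relevance score meets the threshold."""
    for frame in frames:
        if frame.relevance >= threshold:
            yield frame


# Four captured frames, two of which the assumed model scores as relevant.
frames = [Frame(0, 0.05), Frame(1, 0.92), Frame(2, 0.10), Frame(3, 0.71)]
uploaded_ids = [f.frame_id for f in filter_on_edge(frames)]
print(uploaded_ids)
```

In this sketch only the frames that pass the filter would be sent to the cloud; the rest never leave the camera, which is where the bandwidth saving would come from.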

While the need to balance work between the edge and the cloud is clear, building such a distributed AI network requires the industry to address the challenge of sharing AI tasks between the two.

NICE Alliance is publishing a specification that can capture scene-based images or video streams containing an abundance of specific information, such as image frames, audio, and metadata, in cameras. The NICE Specification defines a new standard way for camera devices, cloud services, and apps to communicate with each other and establish an effective solution, enabling a new class of utility services for consumers and creating new opportunities and business models for emerging applications.
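
As a rough illustration of what a scene-based unit of data might look like, the sketch below groups image frames, audio and metadata into one record. The `SceneRecord` class and its field names are assumptions made for this example and are not drawn from the NICE Specification itself.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class SceneRecord:
    """Hypothetical container for one scene; field names are illustrative only."""
    scene_id: str
    image_frames: List[bytes] = field(default_factory=list)
    audio_clips: List[bytes] = field(default_factory=list)
    metadata: Dict[str, str] = field(default_factory=dict)

    def summary(self) -> Dict[str, int]:
        """Count how many items of each kind the scene carries."""
        return {
            "frames": len(self.image_frames),
            "audio": len(self.audio_clips),
            "metadata": len(self.metadata),
        }


# Placeholder payloads stand in for real encoded image and audio data.
scene = SceneRecord(
    scene_id="front-door-001",
    image_frames=[b"frame-a", b"frame-b"],
    audio_clips=[b"clip-a"],
    metadata={"event": "person_detected"},
)
print(scene.summary())
```

Bundling the pieces of a scene in one record is one way a camera, a cloud service and an app could exchange the same unit of information, assuming they agree on the structure.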

Key features of NICE include secured camera and application management, a scene-based application interface, a layered camera interface, and distributed AI management.

Seamlessly integrating the standardization of advanced IP cameras in surveillance and IoT markets with cloud-based machine-learning and AI applications will require participation from broad ecosystem players, including sensor makers and big data AI solution providers.

Manufacturers adopting NICE expect third-party applications and services to be available and long-lived across different classes of cameras, allowing them to focus on innovating camera features and improving performance.

AI-based video analytics is rapidly advancing and improving in the cloud. Many industry experts agree that one of the major features of the NICE specs is the ability to distribute AI computing across sensors, cameras and in the cloud by defining a new layered control, ultimately utilizing virtualization between layers. This key attribute allows cloud apps and services to more effectively capture appropriate images from sensors and further analyze data in the camera at very low latency, satisfying end-user demands cost-effectively.
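
One way to picture distributing AI computing across layers is a placement rule that runs each task on the layer closest to the scene that can handle it. The sketch below is only an assumption about how such a rule could look; the layer names follow the article, but the capacity numbers, task names and the `place_task` function are hypothetical and do not describe the NICE layered control.

```python
from typing import Dict, Optional

# Hypothetical compute budgets per layer, ordered from closest to the scene outward.
LAYERS: Dict[str, int] = {"sensor": 1, "camera": 10, "cloud": 1000}


def place_task(required_compute: int) -> Optional[str]:
    """Return the first layer, starting at the sensor, with enough capacity."""
    for name, capacity in LAYERS.items():
        if required_compute <= capacity:
            return name
    return None  # no layer in this sketch can run the task


# Illustrative tasks with made-up compute costs.
tasks = {"motion_detect": 1, "face_detect": 8, "model_training": 500}
placements = {task: place_task(cost) for task, cost in tasks.items()}
print(placements)
```

Under these assumed numbers, light tasks stay on the sensor or camera, where latency is lowest, and only the heaviest work goes to the cloud.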