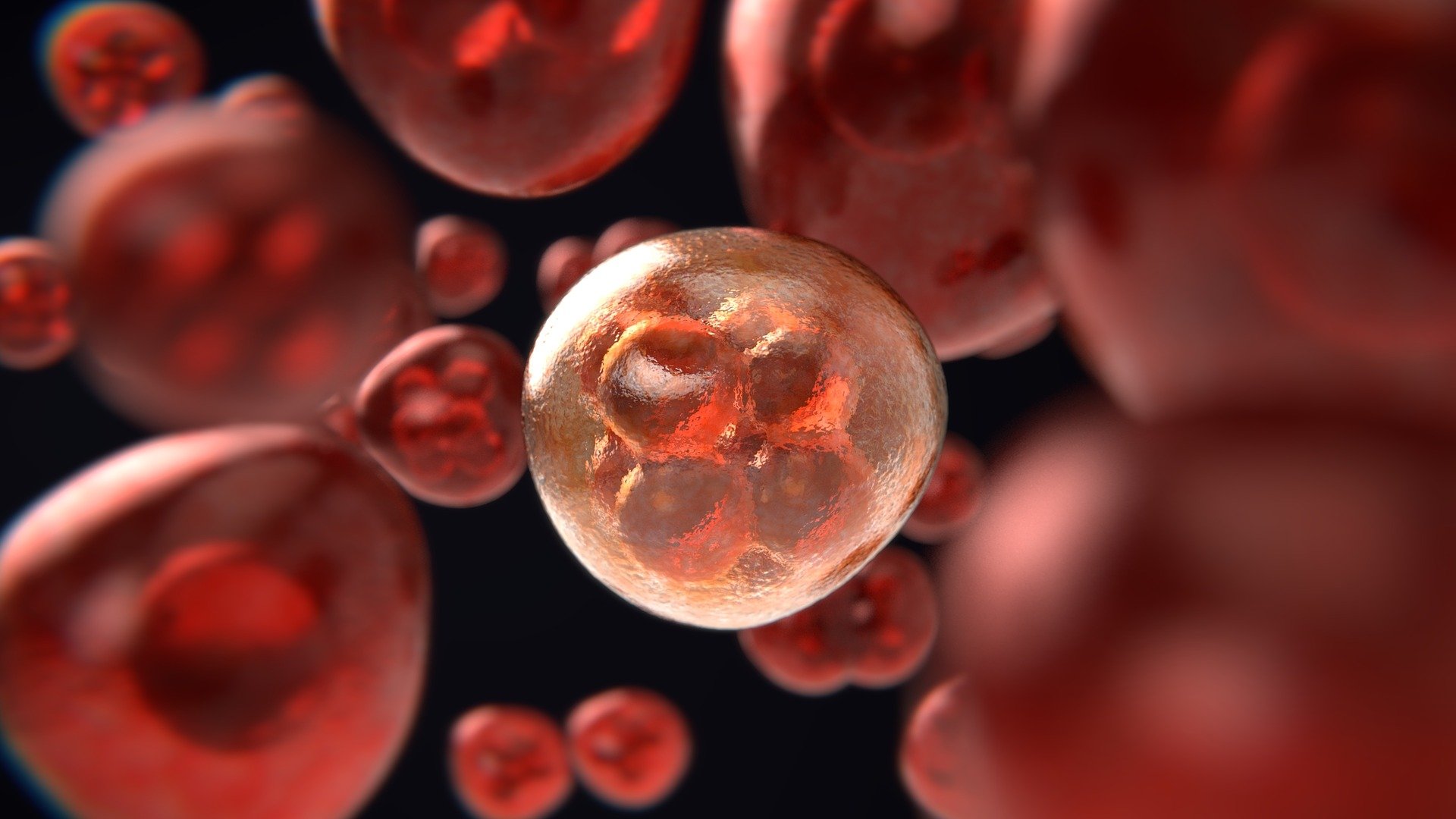

Skoltech researchers have demonstrated that certain patterns that cause neural networks to make mistakes in recognizing images resemble Turing patterns, which are ubiquitous in the natural world. The discovery can be used to design defenses for pattern recognition systems that are vulnerable to attacks. The research was presented at the 35th AAAI Conference on Artificial Intelligence (AAAI-21).

Deep neural networks can still be vulnerable to what are called adversarial perturbations: small but peculiar details in an image that cause errors in the network's output. These perturbations represent a significant security risk. One example is a description, published in 2018, of a way to trick self-driving vehicles into “seeing” benign ads and logos as road signs.

Professor Ivan Oseledets leads the Skoltech Computational Intelligence Lab at the Center for Computational and Data-Intensive Science and Engineering (CDISE). Oseledets and Valentin Khrulkov presented a paper on generating universal adversarial perturbations (UAPs) at the Conference on Computer Vision and Pattern Recognition in 2018, where a stranger remarked that the perturbations looked like Turing patterns. Skoltech master's students Nurislam Tursynbek and Maria Sindeeva and PhD student Ilya Vilkoviskiy formed a team that was able to solve this puzzle.

Prior research has shown that natural Turing patterns can fool a neural network; the team was able to demonstrate this connection and provide ways of generating new attacks. According to the researchers, the simplest way to make models more robust using such patterns is to add them to images and train the network on the perturbed images.
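
The idea of adding a pattern to images before training can be illustrated with a minimal NumPy sketch. It is not the researchers' code: the function names, the stripe pattern standing in for a real Turing pattern, the toy image batch, and the perturbation budget `eps` are all assumptions made for illustration, and the training loop itself is omitted.

```python
import numpy as np

def make_placeholder_pattern(height, width, period=8.0):
    """Hypothetical stand-in for a Turing-like pattern: diagonal stripes in [-1, 1]."""
    ys, xs = np.mgrid[0:height, 0:width]
    return np.sin(2.0 * np.pi * (xs + ys) / period)

def perturb_batch(images, pattern, eps=10.0 / 255.0):
    """Add one shared pattern to every image, bounded by eps in max norm."""
    # Rescale the pattern so its largest absolute value equals eps (assumed budget).
    scaled = eps * pattern / np.max(np.abs(pattern))
    # Broadcast over the batch and channel axes, then keep pixels in [0, 1].
    return np.clip(images + scaled[None, :, :, None], 0.0, 1.0)

rng = np.random.default_rng(0)
images = rng.random((4, 32, 32, 3))        # toy batch of RGB images in [0, 1]
pattern = make_placeholder_pattern(32, 32)
perturbed = perturb_batch(images, pattern)

# A network would then be trained on clean and perturbed copies together.
train_batch = np.concatenate([images, perturbed], axis=0)
```

In practice the placeholder stripes would be replaced by an actual adversarial or Turing pattern, and the combined batch would be fed to whatever training routine the model already uses.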