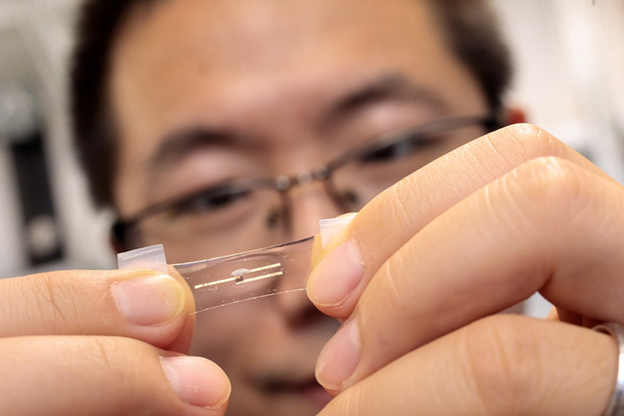

While AI-powered edge computing is pervasive in our lives, batteries limit the AI functionality of these tiny edge devices. In AI chips, data processing and storage happen in separate places: a compute unit and a memory unit. Moving data between the two units consumes most of the energy. Engineers at Stanford created a more efficient and flexible AI chip, which could bring the power of AI into tiny edge devices.

The novel resistive random-access memory (RRAM) chip does AI processing within the memory itself, eliminating the separation between the compute and memory units. Called NeuRRAM, it is about the size of a fingertip and does more work with limited battery power than current chips. The chip is the first to demonstrate a broad range of AI applications on hardware rather than through simulation alone.

To overcome the data movement bottleneck, the researchers used compute-in-memory (CIM), a novel chip architecture that performs AI computing directly within memory rather than in separate compute units. The memory technology is RRAM, which can store large AI models in a small area while consuming very little power, making it well suited to small, low-power edge devices.
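To make the compute-in-memory idea concrete, here is a minimal sketch of how a generic, idealized resistive crossbar can carry out a matrix-vector multiplication where the weights are stored. It assumes each signed weight is held as the difference between two conductances, inputs are applied as row voltages, and each column sums its currents (Ohm's law plus current summation). The conductance range and all function names are hypothetical, and the model ignores noise, wire resistance, and analog-to-digital conversion. It illustrates the general principle only and does not model NeuRRAM's actual circuit design.

```python
# Idealized resistive crossbar: weights live in the array as conductances,
# so multiplying and accumulating happen where the data is stored.
# G_MIN, G_MAX, and every function name here are illustrative assumptions.

G_MIN = 1e-6   # siemens, assumed lowest programmable conductance
G_MAX = 40e-6  # siemens, assumed highest programmable conductance


def weights_to_conductances(weights):
    """Map signed weights to a (g_pos, g_neg) conductance pair per weight."""
    w_max = max(abs(w) for row in weights for w in row) or 1.0
    scale = (G_MAX - G_MIN) / w_max  # siemens per unit of weight
    g_pos = [[G_MIN + scale * max(w, 0.0) for w in row] for row in weights]
    g_neg = [[G_MIN + scale * max(-w, 0.0) for w in row] for row in weights]
    return g_pos, g_neg, scale


def crossbar_currents(g_pos, g_neg, voltages):
    """Differential column currents for the given row voltages."""
    currents = []
    for j in range(len(g_pos[0])):
        # Each cell contributes I = V * G; a column wire sums its cells.
        i_pos = sum(v * g_pos[i][j] for i, v in enumerate(voltages))
        i_neg = sum(v * g_neg[i][j] for i, v in enumerate(voltages))
        currents.append(i_pos - i_neg)
    return currents


def matvec_in_memory(weights, inputs):
    """Compute inputs @ weights via the idealized crossbar model."""
    g_pos, g_neg, scale = weights_to_conductances(weights)
    currents = crossbar_currents(g_pos, g_neg, inputs)
    # Convert currents back to weight units.
    return [i / scale for i in currents]


if __name__ == "__main__":
    weights = [[1.0, -2.0], [0.5, 3.0]]  # rows: inputs, columns: outputs
    print(matvec_in_memory(weights, [0.2, 0.4]))
```

In this model the weights never leave the array: only the input voltages and the summed output currents cross its boundary, which is the data movement that a conventional design with separate compute and memory units cannot avoid.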

Testing the chip, the engineers found that it achieved 99% accuracy on handwritten digit recognition from the MNIST dataset, 85.7% accuracy on image classification from the CIFAR-10 dataset, 84.7% accuracy on Google speech command recognition, and a 70% reduction in image-reconstruction error on a Bayesian image recovery task.

NeuRRAM is now a physical proof-of-concept that needs more development before it’s ready to be translated into actual edge devices. If mass-produced, however, these chips would be cheap enough, adaptable enough, and low-power enough that we could use them to advance technologies already improving our lives.