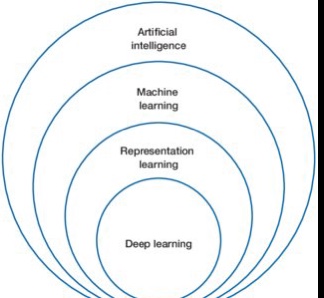

When we think of machine learning, we picture a model making decisions across millions of data inputs. Understanding those decisions is harder: the methods that explain a model's reasoning typically cover only one decision at a time, and each explanation must then be evaluated manually.

A research team from MIT and IBM Research has created a method that lets users aggregate, sort, and rank these individual explanations to rapidly analyze a machine-learning model’s behavior. Called Shared Interest, the method incorporates quantifiable metrics that measure how well a model’s reasoning matches that of a human.
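To make the aggregate-sort-rank workflow concrete, here is a minimal Python sketch. It assumes each explanation has already been reduced to a single alignment score between 0 and 1; the `Explanation` record, its field names, and the example scores are hypothetical and are not the team's code or data. Sorting in ascending order surfaces the decisions whose reasoning least matches the human's first.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Explanation:
    example_id: str   # hypothetical identifier for one input
    predicted: str    # the model's predicted label for that input
    alignment: float  # assumed precomputed human-AI alignment score in [0, 1]


def rank_by_alignment(explanations, ascending=True):
    """Sort explanations by alignment; by default the least-aligned come first."""
    return sorted(explanations, key=lambda e: e.alignment, reverse=not ascending)


def mean_alignment_by_label(explanations):
    """Aggregate: average alignment score for each predicted label."""
    groups = {}
    for e in explanations:
        groups.setdefault(e.predicted, []).append(e.alignment)
    return {label: mean(scores) for label, scores in groups.items()}


if __name__ == "__main__":
    # Hypothetical scores for illustration only; not results from the paper.
    batch = [
        Explanation("img_001", "dog", 0.82),
        Explanation("img_002", "dog", 0.15),
        Explanation("img_003", "cat", 0.64),
        Explanation("img_004", "cat", 0.40),
    ]
    for e in rank_by_alignment(batch):
        print(e.example_id, e.predicted, e.alignment)
    print(mean_alignment_by_label(batch))
```

Grouping by predicted label is just one possible aggregation; the same pattern works for any attribute attached to each explanation.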

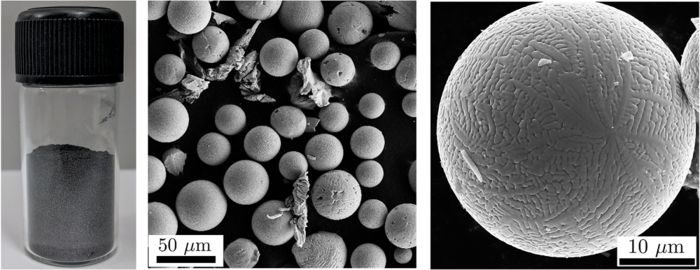

Shared Interest could help uncover trends in a model’s decision-making. It builds on saliency methods, techniques that show how a machine-learning model made a specific decision by highlighting the areas of the input that were most important to it. These areas are visualized as a type of heatmap, called a saliency map, often overlaid on the original image. Shared Interest works by comparing the output of saliency methods to ground-truth data. In an image dataset, ground-truth data are typically human-generated annotations that surround the relevant parts of each image.
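As a minimal sketch of this comparison step, the Python below assumes a saliency map is a 2D grid of scores, binarizes it with a fixed threshold, and measures its overlap with a binary ground-truth mask. The three ratios (intersection over union, and the fraction of the ground-truth region and of the salient region that overlap) are standard set-overlap measures that we believe correspond to the paper's metrics; the function names, the threshold, and the toy data are assumptions for illustration.

```python
def binarize(saliency, threshold):
    """Convert a continuous saliency map (2D list of floats) into a set of salient pixel coordinates."""
    return {
        (r, c)
        for r, row in enumerate(saliency)
        for c, value in enumerate(row)
        if value >= threshold
    }


def mask_to_set(mask):
    """Convert a binary ground-truth mask (2D list of 0/1) into a set of pixel coordinates."""
    return {(r, c) for r, row in enumerate(mask) for c, v in enumerate(row) if v}


def overlap_scores(salient, truth):
    """Compare the salient region with the human-annotated region."""
    inter = len(salient & truth)
    union = len(salient | truth)
    return {
        # Overall agreement between the two regions.
        "iou": inter / union if union else 0.0,
        # How much of the annotated region the model relied on.
        "ground_truth_coverage": inter / len(truth) if truth else 0.0,
        # How much of the model's salient region lies inside the annotation.
        "saliency_coverage": inter / len(salient) if salient else 0.0,
    }


if __name__ == "__main__":
    # Toy 4x4 example; the values are made up for illustration.
    saliency = [
        [0.9, 0.8, 0.1, 0.0],
        [0.7, 0.6, 0.2, 0.0],
        [0.1, 0.1, 0.0, 0.0],
        [0.0, 0.0, 0.0, 0.0],
    ]
    ground_truth = [
        [0, 1, 1, 0],
        [0, 1, 1, 0],
        [0, 0, 0, 0],
        [0, 0, 0, 0],
    ]
    print(overlap_scores(binarize(saliency, 0.5), mask_to_set(ground_truth)))
```

Reporting the coverage ratios separately, rather than IoU alone, distinguishes cases that IoU blurs together: for instance, high saliency coverage with low ground-truth coverage would suggest the model focused on only part of the annotated object.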

For more information, see the team’s paper, “Shared Interest: Measuring Human-AI Alignment to Identify Recurring Patterns in Model Behavior.”

https://www.eurekalert.org/news-releases/948925