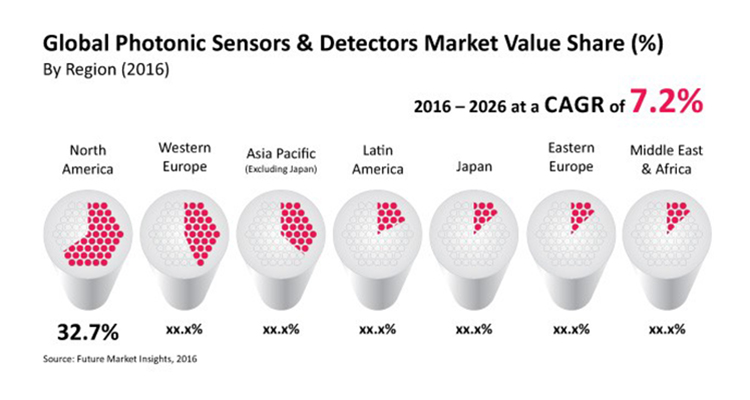

Future Market Insights has published key insights on the global photonic sensors and detectors market in a new report titled ‘Photonic Sensors and Detectors Market: Global Industry Analysis & Opportunity Assessment, 2016-2026’. The market was valued at $22.5bn in 2015 and is expected to register a CAGR of 7.2% during the forecast period (2016–2026).
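As a rough illustration of what the headline figures above imply, the following sketch compounds the 2015 valuation forward at the stated CAGR. The function name and the choice of compounding periods are assumptions made for this example; the report summary does not say whether the 7.2% rate is applied over 10 or 11 annual steps, so both are shown and neither result should be read as a figure from the report.

```python
def project_value(base_value_bn: float, cagr: float, years: int) -> float:
    """Compound a base market value forward at a constant annual growth rate."""
    return base_value_bn * (1.0 + cagr) ** years


BASE_2015_BN = 22.5  # from the text: 2015 market value in $bn
CAGR = 0.072         # from the text: 7.2% CAGR over 2016-2026

# Assumption: the number of compounding steps is not stated in the text.
# 11 steps treats 2015 as the base year and 2026 as the end year;
# 10 steps treats the forecast window itself as ten annual steps.
for years in (10, 11):
    value = project_value(BASE_2015_BN, CAGR, years)
    print(f"{years} years of growth: ${value:.1f}bn")
```

The two results bracket the market size implied for 2026 under a constant growth rate, which is a simplification: actual year-on-year growth will vary.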

According to Future Market Insights, increasing investments in telecommunication infrastructure and various government initiatives to implement fiber optic communication are major factors driving the growth of the global photonic sensors and detectors market.

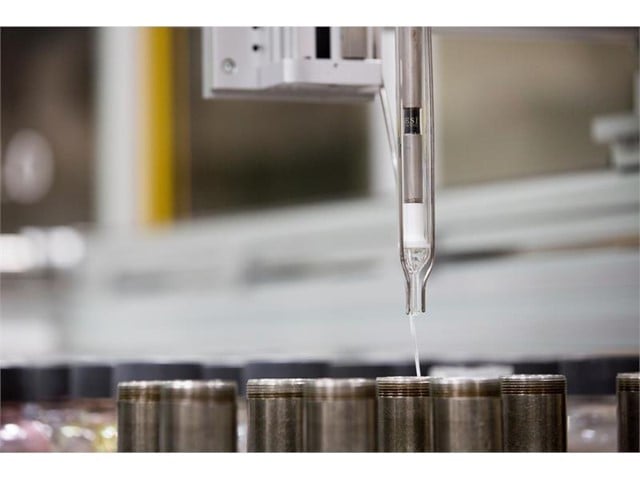

Additionally, the increasing adoption of industrial automation solutions to address the global shortage of unskilled labor and to reduce operating costs, together with the continuous development and implementation of new technologies in medical devices, is propelling the growth of the global photonic sensors and detectors market.

An Electronics and ICT analyst at Future Market Insights stated: “Leading players in the global photonic sensors and detectors market are faced with major challenges that force them to reduce profit margins from image sensors, thereby affecting profitability. Also, a lengthy regulatory approval and technology assessment process pertaining to new product development is likely to restrict growth of the global photonic sensors and detectors market during the forecast period.”

Segmentation highlights

The global photonic sensors and detectors market is segmented on the basis of Sensor Type (Fiber Optic Sensors, Biophotonic Sensors, Image Sensors, Others); Detector Type (Photo Transistors, Single Photon Counting Modules, Photodiodes, Photocells, Others); and End-use Sector (Defense & Security, Medical & Healthcare, Chemicals & Petrochemicals, Consumer Electronics & Entertainment, Industrial Manufacturing, Aviation, Research & Development, Others).

- The Fiber Optic Sensors segment is estimated to account for a market value share of 37.7% by the end of 2016, while the Biophotonic Sensors segment is expected to be the second largest, with a 29.9% value share.

- In terms of sales volume, the Single Photon Counting Modules segment is expected to register the highest CAGR of 10.5% during the forecast period. The Photo Transistors segment is estimated to witness a CAGR of 7.0% between 2016 and 2026.

- The Aviation segment is anticipated to register high YoY growth rates and a CAGR of 10.2% from 2016 to 2026, while the Defense & Security segment is estimated to account for the highest market value share, 26.3%, by the end of 2016.

Regional market projections

The global photonic sensors and detectors market is segmented on the basis of region into North America, Latin America, Western Europe, Eastern Europe, Asia Pacific excluding Japan (APEJ), Japan, and the Middle East and Africa (MEA). North America accounted for the highest market value share in 2015, when it was valued at $7.4bn, and is estimated to account for a 32.7% value share of the global market by the end of 2016. APEJ is projected to be the most attractive regional market during the forecast period: it is anticipated to be valued at $4.4bn by the end of 2016, rising to $11.4bn by the end of 2026.
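The regional figures above can be cross-checked with some simple arithmetic. The sketch below derives the growth rate implied by the two APEJ valuations and North America's share of the 2015 global total ($22.5bn, as stated earlier in the article). The function name is hypothetical, and treating 2016 to 2026 as ten compounding years is an assumption of this example, not a method taken from the report.

```python
def implied_cagr(start_value_bn: float, end_value_bn: float, years: int) -> float:
    """Return the constant annual growth rate linking two valuations."""
    return (end_value_bn / start_value_bn) ** (1.0 / years) - 1.0


# APEJ valuations from the text, in $bn.
APEJ_2016_BN = 4.4
APEJ_2026_BN = 11.4

# Assumption: end of 2016 to end of 2026 is treated as 10 compounding years.
apej_rate = implied_cagr(APEJ_2016_BN, APEJ_2026_BN, years=10)
print(f"Implied APEJ CAGR: {apej_rate:.1%}")

# North America's share of the 2015 global market, from the stated valuations.
na_share_2015 = 7.4 / 22.5
print(f"North America 2015 share: {na_share_2015:.1%}")
```

Under these assumptions the implied APEJ growth rate comes out at about 10% a year, above the 7.2% stated for the global market, which is consistent with the description of APEJ as the most attractive region.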

Vendor insights

The report features some of the leading companies operating in the global photonic sensors and detectors market. Key players profiled in the report are Hamamatsu Photonics K.K., OMRON, ON Semiconductor, SAMSUNG, Sony, KEYENCE, Pepperl+Fuchs, Prime Photonics, Banpil Photonics, and NP Photonics.

Leading companies in the global photonic sensors and detectors market are expanding their presence in emerging markets and are diversifying their product portfolio to strengthen their market share and enhance their customer base.