There’s been a lot of discussion around AI replacing engineers. In some areas—especially software and routine design work—that concern isn’t entirely unfounded.

But not every discipline is exposed in the same way.

Power engineering sits much closer to physical infrastructure, safety constraints, and equipment that rarely behaves as cleanly in the field as it does in simulation. That changes how AI is used. Instead of replacing engineers, it’s starting to change how the work gets done, and in some cases it’s increasing the amount of work that needs to be done.

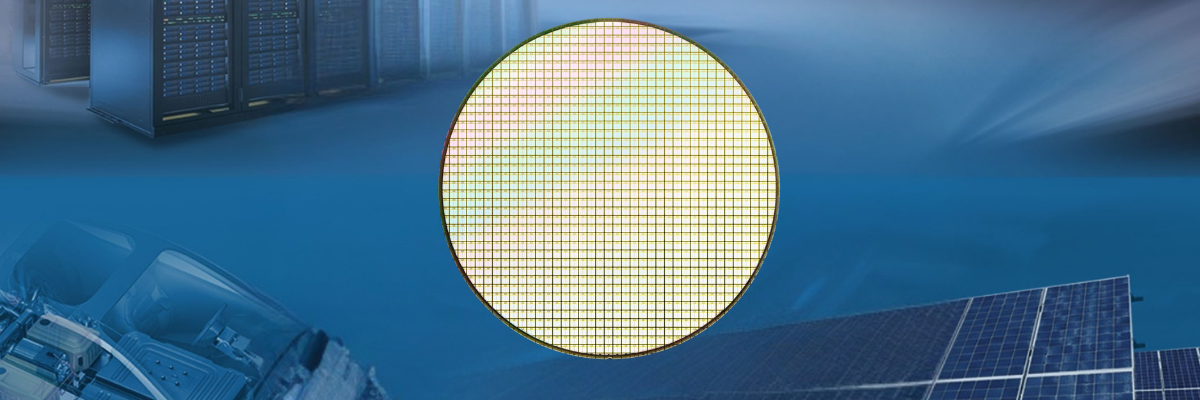

One of the biggest shifts is coming from demand. AI itself is driving a surge in electricity use, particularly through large-scale data centers. These facilities operate at tens to hundreds of megawatts and require stable, high-quality power with minimal interruption. That pressure lands directly on engineers working in transmission planning, substation design, and medium-voltage distribution.

Utilities and developers are dealing with more complex interconnection studies, tighter timelines, and heavier loads being added to existing infrastructure. Engineers are running more load flow studies, more contingency analyses, and more coordination across systems just to keep up. That’s not work that disappears—it grows.

Where AI starts to make a difference is in the repetitive analysis underneath these decisions.

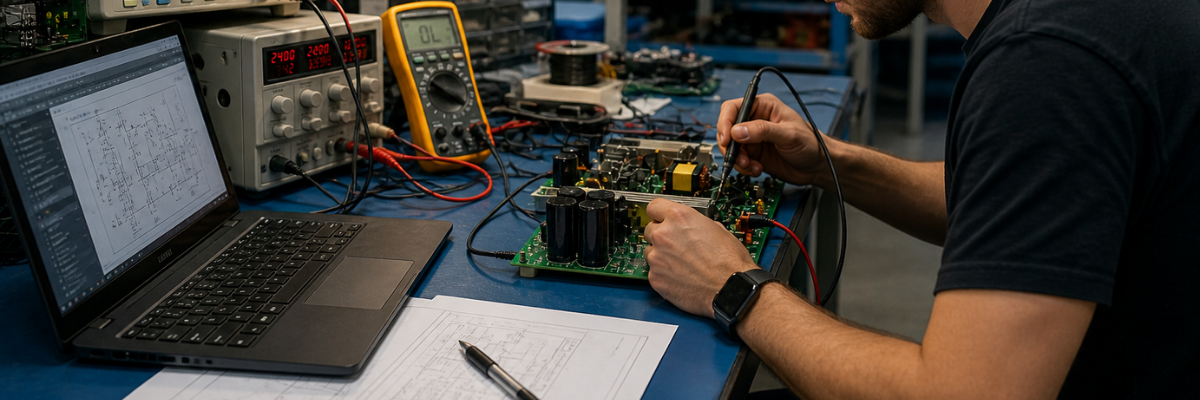

In power system studies—using tools like ETAP, PSCAD, or DIgSILENT—engineers typically define a set of operating conditions and run simulations to evaluate system behavior. That includes peak load scenarios, fault conditions, and equipment outages. Each variation requires setup, execution, and review.

Newer AI-assisted study tools can run through a wider range of these scenarios automatically. They can flag voltage violations, identify worst-case fault currents, and surface combinations of events that might otherwise be missed. The engineer still has to validate and interpret the results, but getting to those results takes less time.
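To make the idea of an automated scenario sweep concrete, here is a minimal sketch in Python. It is not how ETAP, PSCAD, or DIgSILENT work internally. It assumes a hypothetical three-bus radial feeder with made-up per-unit impedances and loads, uses the linearized voltage-drop approximation (drop ≈ R·P + X·Q) instead of a full AC load flow, and treats 0.95 pu as an assumed lower voltage limit.

```python
from itertools import product

# Hypothetical radial feeder: segment i is (R_pu, X_pu) and feeds bus i,
# which carries a base load (P_pu, Q_pu). All values are illustrative.
SEGMENTS = [(0.010, 0.020), (0.015, 0.030), (0.020, 0.040)]
BASE_LOADS = [(0.30, 0.10), (0.40, 0.15), (0.50, 0.20)]
V_SOURCE = 1.0
V_MIN = 0.95  # assumed lower voltage limit in per unit


def bus_voltages(load_scale, dropped_bus=None):
    """Approximate bus voltages using the linearized drop dV ~ R*P + X*Q."""
    loads = [
        (0.0, 0.0) if i == dropped_bus else (p * load_scale, q * load_scale)
        for i, (p, q) in enumerate(BASE_LOADS)
    ]
    voltages = []
    v = V_SOURCE
    for i, (r, x) in enumerate(SEGMENTS):
        # Flow through segment i is the sum of all loads at or beyond bus i.
        p_flow = sum(p for p, _ in loads[i:])
        q_flow = sum(q for _, q in loads[i:])
        v -= r * p_flow + x * q_flow
        voltages.append(v)
    return voltages


def sweep(load_scales, dropped_options):
    """Run every scenario combination and collect undervoltage violations."""
    violations = []
    for scale, dropped in product(load_scales, dropped_options):
        for bus, v in enumerate(bus_voltages(scale, dropped)):
            if v < V_MIN:
                violations.append((scale, dropped, bus, round(v, 4)))
    return violations


if __name__ == "__main__":
    # Three loading levels, each with no load dropped and with bus 2 dropped.
    for row in sweep([0.5, 1.0, 1.2], [None, 2]):
        print(row)
```

The sweep only lists which combinations break the limit. Deciding whether a flagged case is credible, and what to do about it, remains the engineer's job.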

The same pattern is starting to show up in protection engineering.

Relay coordination studies involve choosing pickup and time-delay settings so that the resulting time-current curves make protective devices trip in the correct order during a fault. In a substation or industrial system, that can mean coordinating dozens of relays across feeders, transformers, and incoming lines.

Software can now assist by suggesting initial settings or highlighting where coordination breaks down. That speeds up the process, especially in larger systems. But final decisions still depend on system knowledge—fault levels, equipment ratings, and how conservative the protection scheme needs to be for a given application.
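As an illustration of the kind of check such software automates, the sketch below tests one upstream/downstream relay pair at a single fault current. It assumes both relays use the IEC standard inverse characteristic, t = TMS × 0.14 / ((I/Is)^0.02 − 1). The 0.3 s grading margin, the pickup currents, and the time multiplier settings are assumptions chosen for the example, not recommendations.

```python
def iec_standard_inverse(current_a, pickup_a, tms):
    """Trip time in seconds for the IEC standard inverse curve."""
    multiple = current_a / pickup_a
    if multiple <= 1.0:
        return float("inf")  # at or below pickup, the relay does not operate
    return tms * 0.14 / (multiple ** 0.02 - 1.0)


def check_coordination(fault_a, downstream, upstream, margin_s=0.3):
    """Check one relay pair at one fault current.

    downstream and upstream are (pickup_a, tms) tuples. Returns
    (ok, t_down, t_up), where ok means the upstream relay trips at
    least margin_s seconds after the downstream one.
    """
    t_down = iec_standard_inverse(fault_a, *downstream)
    t_up = iec_standard_inverse(fault_a, *upstream)
    return (t_up - t_down) >= margin_s, t_down, t_up


if __name__ == "__main__":
    feeder_relay = (200.0, 0.1)    # hypothetical downstream settings
    incomer_relay = (400.0, 0.2)   # hypothetical upstream settings
    ok, t_down, t_up = check_coordination(2000.0, feeder_relay, incomer_relay)
    print(f"downstream {t_down:.3f} s, upstream {t_up:.3f} s, coordinated: {ok}")
```

A real study would repeat this across the full range of fault currents and every relay pair, which is where automated assistance saves the most time.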

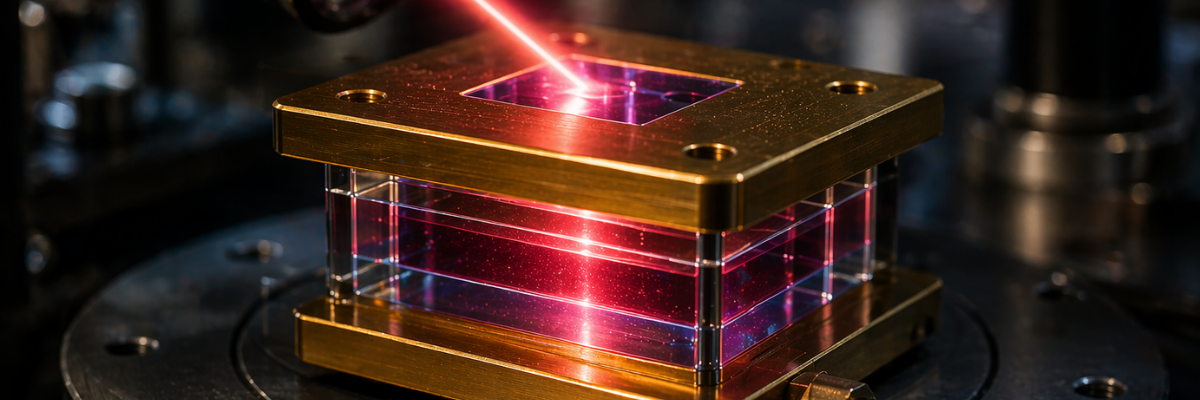

In power electronics, particularly with SiC and GaN devices, design tools are becoming more capable of handling multi-variable optimization. Engineers working on EV inverters, DC fast chargers, or server power supplies can evaluate switching losses, thermal behavior, and efficiency across different operating points more quickly than before.

These tools help explore the design space, but they don’t define the requirements. Decisions about cost targets, thermal limits, and long-term reliability still come from the engineer.
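A rough sketch of what exploring the design space can look like: the code below sweeps switching frequency for a single hard-switched device and keeps the frequencies whose estimated junction temperature stays under a limit. It relies on first-order textbook approximations (conduction loss I²·R, switching loss ½·V·I·(t_rise + t_fall)·f_sw, and a single lumped thermal resistance). Every device value and limit here is hypothetical and not taken from any datasheet.

```python
def switch_losses(v_dc, i_load, r_ds_on, t_rise, t_fall, f_sw):
    """First-order loss estimate in watts for one hard-switched device.

    Conduction loss assumes the device carries i_load continuously,
    which is a deliberately pessimistic simplification.
    """
    conduction = i_load ** 2 * r_ds_on
    switching = 0.5 * v_dc * i_load * (t_rise + t_fall) * f_sw
    return conduction, switching


def junction_temp(p_loss, t_ambient, r_th_ja):
    """Steady-state junction temperature with one lumped thermal resistance."""
    return t_ambient + p_loss * r_th_ja


def feasible_frequencies(freqs_hz, device, t_ambient, r_th_ja, t_j_max):
    """Return the frequencies whose estimated junction temperature is within t_j_max."""
    feasible = []
    for f_sw in freqs_hz:
        conduction, switching = switch_losses(f_sw=f_sw, **device)
        if junction_temp(conduction + switching, t_ambient, r_th_ja) <= t_j_max:
            feasible.append(f_sw)
    return feasible


if __name__ == "__main__":
    # Hypothetical SiC-like device values, chosen only for illustration.
    device = dict(v_dc=800.0, i_load=20.0, r_ds_on=0.04,
                  t_rise=20e-9, t_fall=20e-9)
    print(feasible_frequencies([50e3, 100e3, 200e3], device,
                               t_ambient=40.0, r_th_ja=2.0, t_j_max=150.0))
```

The ambient temperature, thermal resistance, and junction limit are inputs to the sweep, not outputs of it, which mirrors the point above: the tool explores, and the engineer sets the constraints.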

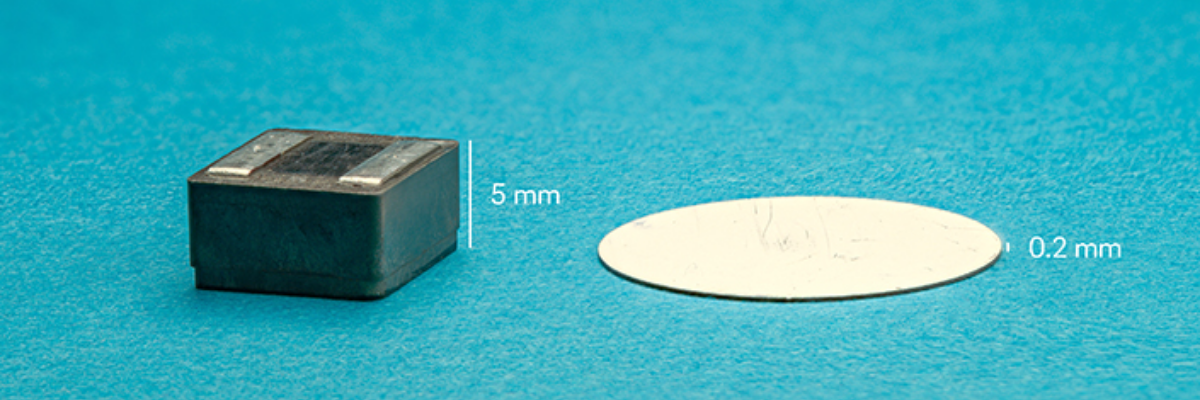

There’s also growing use of AI in predictive maintenance.

Utilities and industrial operators are monitoring assets like transformers, breakers, and cable systems using data from temperature sensors, dissolved gas analysis, and electrical measurements. Patterns in that data can indicate early signs of failure.

For example, rising gas levels in a transformer can point to insulation breakdown. Irregular breaker operation times can signal mechanical wear. Partial discharge activity can indicate degradation in cable insulation. These systems help prioritize maintenance, but they don’t eliminate the need for engineers to diagnose and act on those findings.
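The prioritization step can be as simple as trending a measurement and ranking assets by how fast it is rising. The sketch below fits a least-squares slope to dissolved gas readings and flags transformers that exceed either an absolute level or a rate of rise. The asset names, readings, and both thresholds are invented for illustration and do not come from any standard or guide.

```python
def trend_slope(days, values):
    """Least-squares slope of values against days (units per day)."""
    n = len(days)
    mean_x = sum(days) / n
    mean_y = sum(values) / n
    sxx = sum((x - mean_x) ** 2 for x in days)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(days, values))
    return sxy / sxx


def flag_assets(history, ppm_limit, ppm_per_day_limit):
    """Return flagged assets as (name, latest_ppm, slope), fastest riser first.

    history maps an asset name to (days, ppm_readings). Both limits are
    placeholders that an engineer would set for the specific fleet.
    """
    flagged = []
    for name, (days, ppm) in history.items():
        slope = trend_slope(days, ppm)
        if ppm[-1] > ppm_limit or slope > ppm_per_day_limit:
            flagged.append((name, ppm[-1], round(slope, 3)))
    return sorted(flagged, key=lambda item: item[2], reverse=True)


if __name__ == "__main__":
    # Hypothetical gas readings in ppm, sampled every 30 days.
    history = {
        "T1": ([0, 30, 60, 90], [50, 52, 51, 53]),
        "T2": ([0, 30, 60, 90], [60, 90, 130, 180]),
    }
    print(flag_assets(history, ppm_limit=150, ppm_per_day_limit=0.5))
```

A flag from a routine like this says where to look first. It does not say what the fault is, which is why diagnosis stays with the engineer.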

Grid operations are another area seeing steady changes.

As more distributed energy resources—solar, wind, and battery storage—are added to the grid, balancing supply and demand becomes more complex. Software can assist with forecasting and dispatch, helping operators maintain frequency and voltage stability under changing conditions.
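A simplified sketch of the dispatch side of that problem: subtract forecast solar and wind from demand to get the net load, then fill it from the cheapest dispatchable units first. The forecasts are taken as given here (producing them is a separate problem), and the unit names, capacities, and costs are hypothetical. Real dispatch also has to respect ramp rates, network limits, and reserve requirements, none of which are modeled.

```python
def net_load(demand_mw, solar_mw, wind_mw):
    """Demand left over after forecast solar and wind, per interval."""
    return [d - s - w for d, s, w in zip(demand_mw, solar_mw, wind_mw)]


def merit_order_dispatch(required_mw, units):
    """Fill required_mw from the cheapest units first.

    units is a list of (name, capacity_mw, cost_per_mwh). Returns
    (schedule, shortfall_mw); a positive shortfall means the listed
    units cannot cover the requirement.
    """
    remaining = max(required_mw, 0.0)
    schedule = {}
    for name, capacity, _cost in sorted(units, key=lambda u: u[2]):
        output = min(capacity, remaining)
        schedule[name] = output
        remaining -= output
    return schedule, remaining


if __name__ == "__main__":
    # Hypothetical fleet and a three-interval forecast, values in MW.
    units = [("gas", 100, 60), ("hydro", 80, 20), ("battery", 30, 40)]
    demand = [200, 220, 260]
    solar = [30, 10, 0]
    wind = [20, 15, 10]
    for interval, required in enumerate(net_load(demand, solar, wind)):
        schedule, shortfall = merit_order_dispatch(required, units)
        print(interval, schedule, "shortfall:", shortfall)
```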

But when conditions fall outside expected ranges—a fault, a sudden loss of generation, or extreme weather—human oversight remains critical. Power systems don’t always behave the way models predict, especially under stress.

That physical reality is what limits full automation.

Equipment ages. Installations vary. Environmental conditions change. A relay misoperation or protection failure isn’t just a modeling error—it can lead to equipment damage or outages. Engineers need to understand not just how the system is supposed to behave, but how it might behave when something goes wrong.

Because of that, the role isn’t disappearing. It’s shifting.

Less time is spent running standard calculations or formatting results. More time goes into reviewing outputs, understanding system interactions, and making decisions under uncertainty.

There’s also a growing difference between engineers who use these tools effectively and those who don’t. Someone working in substation design who can quickly evaluate multiple grounding strategies or protection schemes has a clear advantage over someone doing the same work manually.

The broader trend points in one direction. Electrification is expanding into transportation, heating, and industrial systems. Data centers continue to scale. Renewable integration is adding variability to grid behavior.

Each of these trends increases system complexity.

AI helps manage parts of that complexity by speeding up analysis and surfacing information earlier in the design process. But it doesn’t remove the need for engineering judgment.

Power engineering has always been about keeping systems stable, efficient, and safe under real-world conditions. That responsibility doesn’t shift to software. It stays with the engineer—just with better tools than before.