Machine learning takes time, exposure to massive data sets, and a lot of computing power.

In 2016, a computer program beat one of the world’s top players at Go, a complex board game. How? Largely by using reinforcement learning, a type of machine learning whereby computers train themselves after being programmed with simple instructions. The computers learn from their mistakes and, step by step, become highly proficient.

The main drawback to reinforcement learning is that it can’t be used in some real-life applications. That’s because in the process of training themselves, computers initially try just about anything and everything before eventually stumbling on the right path. This initial trial-and-error phase can be problematic for certain applications, such as climate-control systems where abrupt swings in temperature wouldn’t be tolerated.
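To make that trial-and-error phase concrete, here is a minimal Python sketch of an epsilon-greedy rule, one common way reinforcement-learning agents explore. It is an illustration only and is not taken from the CSEM study: the function names, the linear schedule and the values 1.0, 0.05 and 1000 are all assumptions.

```python
import random

def epsilon_greedy(q_values, epsilon, rng):
    """Pick a random action with probability epsilon, otherwise the best-known one."""
    if rng.random() < epsilon:
        return rng.randrange(len(q_values))                    # explore: try anything
    return max(range(len(q_values)), key=lambda a: q_values[a])  # exploit

def epsilon_at(step, start=1.0, end=0.05, decay_steps=1000):
    """Linearly reduce exploration from `start` to `end` (assumed schedule)."""
    frac = min(step / decay_steps, 1.0)
    return start + frac * (end - start)

rng = random.Random(0)
estimates = [0.1, 0.9, 0.3]   # hypothetical value estimates for three heater settings
early_action = epsilon_greedy(estimates, epsilon_at(0), rng)     # always random
late_action = epsilon_greedy(estimates, epsilon_at(5000), rng)   # usually the best setting
```

With the exploration rate near 1 at the start, almost every action is random, which is what would produce abrupt temperature swings on a real climate-control system.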

Learning the driver’s manual before starting the engine

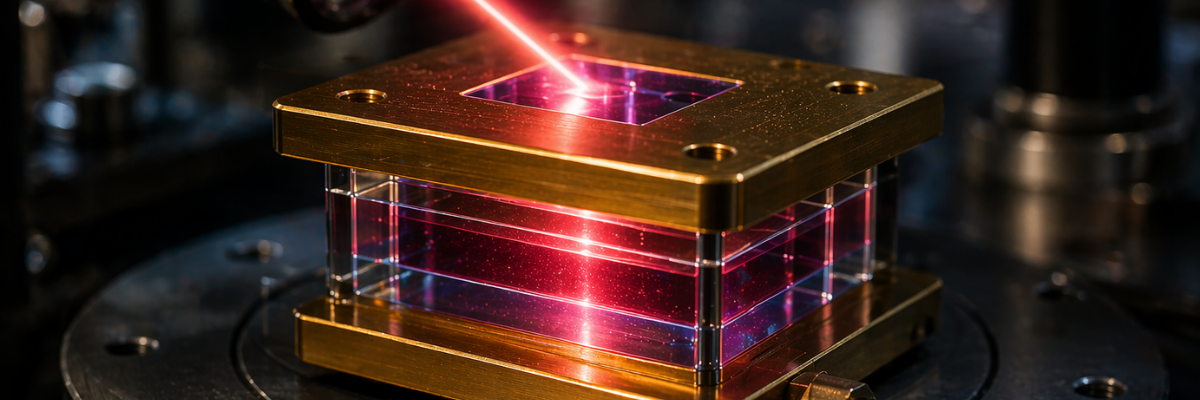

Engineers at the Swiss Center for Electronics and Microtechnology (CSEM) have developed an approach that overcomes this problem. They showed that computers can first be trained on highly simplified theoretical models before being set to learn on real-life systems. As a result, when the computers start the machine-learning process on the real-life systems, they can draw on what they learned previously on the models, and get on the right path quickly without going through a period of extreme fluctuations. The engineers’ research has just been published in IEEE Transactions on Neural Networks and Learning Systems.

“It’s like learning the driver’s manual before you start a car,” says Pierre-Jean Alet, head of smart energy systems research at CSEM and a co-author of the study. “With this pre-training step, computers build up a knowledge base they can draw on so they aren’t flying blind as they search for the right answer.”

Slashing energy use by over 20%

The engineers tested their approach on a heating, ventilation and air conditioning (HVAC) system for a complex 100-room building using a three-step process. First, they trained a computer on a “virtual model” built from simple equations that roughly described the building’s behavior. Then they fed actual building data (temperature, how long blinds were open, weather conditions, etc.) into the computer, to make the training more accurate. Finally, they let the computer run its reinforcement-learning algorithms to find the best way to manage the HVAC system.
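The three steps can be sketched on a toy problem. The Python below is a simplified illustration under stated assumptions, not the CSEM engineers’ implementation: it uses tabular Q-learning on a single room with three hypothetical heater settings, and `real_building` is an invented stand-in for the real system. Every name, temperature range and parameter value is an assumption.

```python
import random

TEMPS = list(range(15, 26))   # discretised room temperatures in °C (assumed range)
ACTIONS = (0, 1, 2)           # hypothetical heater settings: off, low, high
TARGET = 21                   # assumed comfort set point

def clamp(t):
    return max(TEMPS[0], min(TEMPS[-1], t))

def simple_model(temp, action):
    """Rough virtual model: off cools by 1 °C, low holds, high heats by 1 °C."""
    return clamp(temp + action - 1)

def real_building(temp, action):
    """Stand-in for the real system: it loses 2 °C per step when the heater is off."""
    return clamp(temp + (-2 if action == 0 else action - 1))

def reward(next_temp):
    return -abs(next_temp - TARGET)   # penalise distance from the set point

def q_update(q, s, a, s_next, alpha=0.5, gamma=0.9):
    """One standard Q-learning update for the observed transition."""
    best_next = max(q[(s_next, b)] for b in ACTIONS)
    q[(s, a)] += alpha * (reward(s_next) + gamma * best_next - q[(s, a)])

def train_on_model(q, model, sweeps=200):
    """Apply Q-learning updates to every state-action pair; exploring a model is free."""
    for _ in range(sweeps):
        for s in TEMPS:
            for a in ACTIONS:
                q_update(q, s, a, model(s, a))

def greedy(q, s):
    return max(ACTIONS, key=lambda a: q[(s, a)])

# Step 1: pre-train on the simple virtual model.
q = {(s, a): 0.0 for s in TEMPS for a in ACTIONS}
train_on_model(q, simple_model)

# Step 2: refine the model with logged building data (generated here from the stand-in),
# falling back to the simple equations where no data was recorded.
logged = {(s, a): real_building(s, a) for s in range(18, 24) for a in ACTIONS}

def refined_model(temp, action):
    return logged.get((temp, action), simple_model(temp, action))

train_on_model(q, refined_model)

# Step 3: reinforcement learning on the "real" building with only mild exploration.
rng = random.Random(0)
temp, worst_deviation = 19, 0
for _ in range(500):
    a = rng.choice(ACTIONS) if rng.random() < 0.05 else greedy(q, temp)
    nxt = real_building(temp, a)
    q_update(q, temp, a, nxt)
    worst_deviation = max(worst_deviation, abs(nxt - TARGET))
    temp = nxt
```

Because the table is already sensible when step 3 begins, the agent in this sketch needs only a 5% exploration rate on the stand-in building rather than starting from random behaviour. Calling `greedy(q, t)` for each temperature shows the learned policy: heat below the set point, hold at it, and switch off above it.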

Broad applications

This discovery could open up new horizons for machine learning by extending it to applications where large fluctuations in operating parameters would carry significant financial or security costs.

Source: CSEM