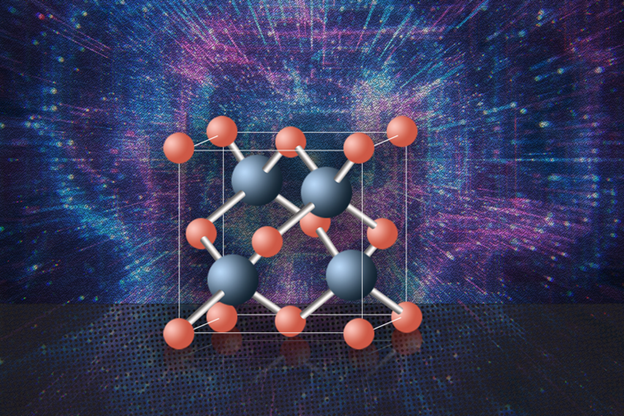

Artificial intelligence systems use statistics and probability to build scalable models from complex data. These computations take time and consume energy.

Qilian Liang, an electrical engineering professor, has won a grant to focus on making artificial intelligence tech faster and more efficient, allowing real-time learning. He and his co-principal investigator Chenyun Pan intend to design deep-learning hardware accelerators to simplify AI model architecture.

First, they intend to simplify the architecture used in hardware design to increase computational speed. They will also create an algorithm to determine whether AI can be implemented at lower cost. Finally, they will develop more efficient circuits and hardware to allow faster computing and save money.

The team will focus on three types of deep-generative models:

- Vision transformer-based generative modeling uses a transformer architecture over patches of an image to improve image recognition. If AI can use environmental clues to determine what it is seeing rather than having to sort through many images, it will require less energy and time.

- Masked generative modeling hides data that is not valuable to the task at hand, lessening the amount of data that AI must sort through. Later, that masked data can be recovered and used to fill in gaps that could allow for earlier decision-making.

- Cross-modal generative modeling uses two kinds of models to simultaneously sort through multimodal data and identify what is useful and what is not.
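To make the first two ideas concrete, here is a minimal sketch of how an image can be cut into patches and how most of those patches can be hidden before a model processes them. It is an illustration only, not the team's method: the function names `patchify` and `random_mask`, the 8-pixel patch size, and the 25 percent keep ratio are all assumptions, and the patches are hidden at random rather than by how valuable they are to the task.

```python
import numpy as np

def patchify(image, patch):
    """Split an (H, W, C) image into non-overlapping, flattened patches."""
    h, w, c = image.shape
    assert h % patch == 0 and w % patch == 0, "image must divide evenly into patches"
    gh, gw = h // patch, w // patch
    # Group pixels by patch row and patch column, then flatten each patch.
    x = image.reshape(gh, patch, gw, patch, c).transpose(0, 2, 1, 3, 4)
    return x.reshape(gh * gw, patch * patch * c)

def random_mask(tokens, keep_ratio, rng):
    """Keep a random subset of patches; return them with kept and hidden indices."""
    n = tokens.shape[0]
    n_keep = max(1, int(round(n * keep_ratio)))
    order = rng.permutation(n)
    keep_idx = np.sort(order[:n_keep])
    masked_idx = np.sort(order[n_keep:])
    return tokens[keep_idx], keep_idx, masked_idx

rng = np.random.default_rng(0)
image = rng.random((32, 32, 3))       # stand-in for a real image (assumption)
tokens = patchify(image, patch=8)     # 16 patches, 192 values each
visible, keep_idx, masked_idx = random_mask(tokens, keep_ratio=0.25, rng=rng)
print(tokens.shape, visible.shape)
```

In this sketch only 4 of the 16 patches would reach the model, which is where the savings in time and energy come from; the hidden indices are kept so the masked patches can be reconstructed later.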

Other fields, such as robotics and autonomous driving, can also benefit from improvements in AI technology.