IDTechEx attended AutoSens 2019, held at the car museum in Brussels. The event covers all future vehicle perception technologies, including lidar, radar, and cameras, and addresses the hardware side as well as software and data processing.

This is an excellent event, with high-quality speakers drawn from established firms as well as start-ups working to advance automotive perception technologies. It is a technology-focused event, designed by engineers for engineers. The next edition of AutoSens will take place in Detroit on 12 to 14 May 2020. In this article, we summarize key learnings from talks on radar and camera systems; a separate article summarizes key learnings on lidar technologies.

We attended this event as part of our ongoing research into autonomous mobility and all associated perception technologies, including lidar and radar. Our research offers scenario-based twenty-year forecasts projecting the rise of the various levels of vehicle autonomy. The forecasts cover not only unit sales but also a detailed price-evolution projection, segmented by all the key constituent technologies in an autonomous vehicle. Our research furthermore considers the impact of shared mobility on total global vehicle sales, forecasting a peak-car scenario.

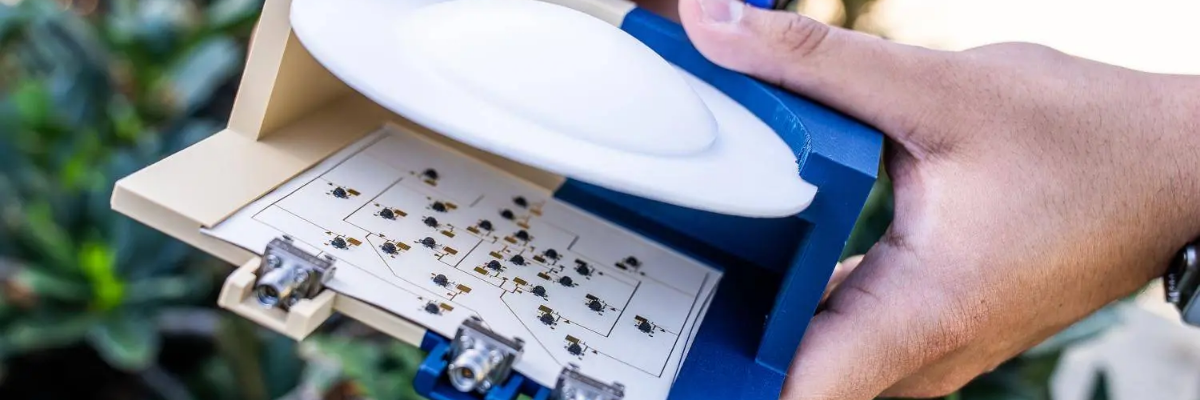

Furthermore, we have in-depth and comprehensive research on lidar and radar technologies. The report ‘Automotive Radar 2020-2040: Devices, Materials, Processing, AI, Markets, and Players’ develops a comprehensive technology roadmap, examining the technology at the level of materials, semiconductor technologies, packaging techniques, antenna arrays, and signal processing.

It demonstrates how radar technology can evolve into 4D imaging radar capable of providing a dense 4D point cloud that enables object detection, classification, and tracking. The report builds short- and long-term forecast models segmented by level of ADAS and autonomy.

Radar technology is already used in automotive to enable various ADAS functions. This technology is, however, changing fast. A shift is taking place towards smaller-node CMOS or SOI chips, enabling greater monolithic integration of functions within the chip itself. The operating frequency is rising, which improves velocity and angular resolution.
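
To make the frequency argument concrete, the sketch below uses two textbook relations: Doppler velocity resolution Δv = λ/(2T), where T is the duration of the chirp frame, and a rough angular resolution of λ/D for an aperture of width D. The 10 ms frame, the 10 cm aperture, and the 77 GHz comparison point are illustrative assumptions rather than figures from any talk, and the helper names are hypothetical.

```python
import math

C = 299_792_458.0  # speed of light, m/s


def wavelength_m(carrier_hz: float) -> float:
    """Free-space wavelength for a given carrier frequency."""
    return C / carrier_hz


def velocity_resolution_mps(carrier_hz: float, frame_time_s: float) -> float:
    """Doppler velocity resolution of a chirp frame lasting frame_time_s: lambda / (2 * T)."""
    return wavelength_m(carrier_hz) / (2.0 * frame_time_s)


def angular_resolution_deg(carrier_hz: float, aperture_m: float) -> float:
    """Rough angular resolution of an aperture of width D: lambda / D radians, in degrees."""
    return math.degrees(wavelength_m(carrier_hz) / aperture_m)


FRAME_TIME_S = 0.010  # assumed 10 ms frame
APERTURE_M = 0.10     # assumed 10 cm aperture

for f_hz in (77e9, 145e9):
    print(
        f"{f_hz / 1e9:.0f} GHz: wavelength {wavelength_m(f_hz) * 1e3:.2f} mm, "
        f"velocity res. {velocity_resolution_mps(f_hz, FRAME_TIME_S):.3f} m/s, "
        f"angular res. ~{angular_resolution_deg(f_hz, APERTURE_M):.2f} deg"
    )
```

Going from 77 GHz to 145 GHz shortens the wavelength by a factor of about 1.9, so for the same frame time and aperture both resolution figures improve by roughly that factor.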

The bandwidth is widening, improving range resolution. Large antenna arrays are being developed, dramatically increasing the virtual aperture size and thus the angular resolution. The point cloud is also becoming denser, potentially enabling much greater use of deep-learning AI. These trends will lead to 4D imaging radar, which can be considered an alternative to lidar.
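
For an FMCW radar, range resolution depends only on the swept bandwidth B: ΔR = c/(2B). The minimal sketch below tabulates this for a few bandwidths; 1 GHz and 4 GHz are illustrative values chosen here, while 10 GHz matches the imec chip discussed later in this article. The helper name is hypothetical.

```python
C = 299_792_458.0  # speed of light, m/s


def range_resolution_m(bandwidth_hz: float) -> float:
    """Range resolution of an FMCW radar sweeping bandwidth_hz: c / (2 * B)."""
    return C / (2.0 * bandwidth_hz)


for bw_hz in (1e9, 4e9, 10e9):
    print(f"B = {bw_hz / 1e9:4.0f} GHz -> range resolution {range_resolution_m(bw_hz) * 100:.1f} cm")
```

Each doubling of bandwidth halves the smallest range separation at which two targets can still be told apart.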

Arbe is an Israeli company at the forefront of some of these trends. It is unique in that it has set out to design its own chip and to develop its own algorithms for radar signal processing. Its chip will be made by GlobalFoundries on a 22nm fully depleted SOI (FD-SOI) technology (likely in Dresden, Germany). The choice of 22nm is important. On the front end, it allows the frequency to be pushed up to the required level. On the digital side, it enables the integration of sufficiently advanced modules.

The architecture has 48 Tx and 48 Rx channels, most likely spread across four (perhaps five) transceiver chips. An in-house processor, likely on the same technology node, is designed to run the system and process the data to output a 4D point cloud. The IC will likely be ready later this year. This radar aims to achieve 1° azimuth and 2° elevation resolution. The HFoV and VFoV are 100° and 30°, respectively, and the quoted range is 300m.
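
In a MIMO radar, each transmit/receive pair acts as one element of a virtual array, so N_Tx × N_Rx channels are available for angle estimation. The sketch below applies this to the 48 Tx / 48 Rx figure and, under the simplifying assumption of a filled uniform linear array with half-wavelength spacing (broadside resolution of roughly 2/N radians), estimates how many virtual elements a given angular resolution needs. Arbe's actual array layout is not described here, and sparse layouts or super-resolution processing can change this arithmetic considerably, so treat the output as an order-of-magnitude guide. The helper names are hypothetical.

```python
import math


def virtual_channels(n_tx: int, n_rx: int) -> int:
    """Virtual channels a MIMO radar can synthesize from n_tx x n_rx pairs."""
    return n_tx * n_rx


def elements_for_resolution(resolution_deg: float) -> int:
    """Virtual elements needed along one axis for a target angular resolution.

    Assumes a filled uniform linear array with half-wavelength spacing, whose
    broadside (Rayleigh) resolution is roughly 2 / N radians.
    """
    return math.ceil(2.0 / math.radians(resolution_deg))


print(virtual_channels(48, 48))        # 2304
print(elements_for_resolution(1.0))    # 115 (azimuth target)
print(elements_for_resolution(2.0))    # 58 (elevation target)
```

A filled rectangular grid meeting both targets at once would need far more than 2,304 elements, which is one reason sparse or non-uniform virtual arrays are attractive for imaging radar; how Arbe distributes its channels between azimuth and elevation is not stated.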

Arbe is also notable in that it is not just focused on hardware design; it is also focused on processing the data that comes off its advanced radar. Arbe is developing what it terms the arbenet, a very high-level representation of which is shown below. Arbe may be doing this partly to demonstrate what is possible with such radars; hardware design will likely remain its core competency.

Arbe is working on clustering, target-list generation, tracking of moving objects, localization relative to a static map, Doppler-based classification, and more. It can use Doppler gradients to measure changes in orientation, which might give hints about intention. It will also perform free-space mapping and use the radar to obtain accurate ego-velocity estimates.
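
One common way to estimate ego-velocity from radar Doppler exploits static detections: a static target at azimuth θ appears with range rate v_r = -(v_x cos θ + v_y sin θ), where (v_x, v_y) is the sensor's own velocity. Fitting this model to many detections by least squares recovers (v_x, v_y). The sketch below is a generic illustration of that technique, not Arbe's disclosed method; `estimate_ego_velocity` is a hypothetical name, and it assumes every detection is static.

```python
import math
from typing import Sequence, Tuple


def estimate_ego_velocity(azimuths_rad: Sequence[float],
                          range_rates_mps: Sequence[float]) -> Tuple[float, float]:
    """Least-squares sensor velocity (vx, vy) from detections assumed static.

    Model per detection: range_rate = -(vx * cos(theta) + vy * sin(theta)),
    with theta the azimuth measured from the sensor's x (boresight) axis.
    """
    # Accumulate the 2x2 normal equations A^T A v = A^T b, with b = -range_rate.
    scc = scs = sss = scb = ssb = 0.0
    for theta, rate in zip(azimuths_rad, range_rates_mps):
        c, s, b = math.cos(theta), math.sin(theta), -rate
        scc += c * c
        scs += c * s
        sss += s * s
        scb += c * b
        ssb += s * b
    det = scc * sss - scs * scs
    if abs(det) < 1e-12:
        raise ValueError("azimuths too close together to separate vx and vy")
    # Solve the 2x2 system with Cramer's rule.
    vx = (sss * scb - scs * ssb) / det
    vy = (scc * ssb - scs * scb) / det
    return vx, vy


if __name__ == "__main__":
    # Synthetic static detections seen from a sensor moving at 10 m/s along boresight.
    angles = [math.radians(a) for a in (-40, -15, 0, 20, 45)]
    rates = [-(10.0 * math.cos(t)) for t in angles]
    print(estimate_ego_velocity(angles, rates))  # close to (10.0, 0.0)
```

In practice, moving targets violate the static assumption, so a robust fit such as RANSAC is typically wrapped around this least-squares step.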

This year is likely to be a critical year for Arbe as many OEMs will make decisions about the sensor suite of choice for the next three to five years.

Imec presented an interesting radar development: a 145 GHz radar with a 10 GHz bandwidth. This is a single-chip 28nm CMOS SoC with the antennas implemented on the chip itself, making it a true radar-in-a-chip solution. The large bandwidth and high operating frequency result in fine range, velocity, and angular resolution.
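
Plugging imec's quoted figures into the standard FMCW relations, with c ≈ 3×10^8 m/s, gives a feel for the scales involved:

$$
\lambda = \frac{c}{f} \approx \frac{3\times10^{8}\,\text{m/s}}{145\times10^{9}\,\text{Hz}} \approx 2.07\,\text{mm},
\qquad
\Delta R = \frac{c}{2B} \approx \frac{3\times10^{8}\,\text{m/s}}{2\times 10\times10^{9}\,\text{Hz}} = 1.5\,\text{cm}.
$$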

The millimeter-level resolution can enable multiple applications. Imec demonstrated in-cabin driver monitoring. This low-clutter environment includes background vibration, but the target is largely static. As the results below show, the radar can fairly accurately track the driver's heartbeat and respiration rhythm, enabling remote monitoring. Achieving this result requires additional algorithmic compensation. The solution requires no ambient light and does not compromise privacy.
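
Vital-sign radar typically relies on phase rather than range resolution alone: once the target's range bin is found, a chest displacement d changes the round-trip phase by 4πd/λ, so sub-millimeter motion is visible at millimeter wavelengths. The minimal sketch below converts an unwrapped phase sequence from one range bin into displacement and picks the strongest frequency in a respiration or heartbeat band using a direct DFT scan. It omits the clutter and vibration compensation imec mentions; the function names, band limits, and synthetic test signal are assumptions for illustration only.

```python
import math
from typing import List, Sequence


def phase_to_displacement_m(phases_rad: Sequence[float], wavelength_m: float) -> List[float]:
    """Convert the unwrapped phase of one range bin into displacement.

    The signal travels to the target and back, so phi = 4*pi*d / lambda.
    """
    return [wavelength_m * p / (4.0 * math.pi) for p in phases_rad]


def dominant_rate_per_min(signal: Sequence[float], fs_hz: float,
                          f_lo_hz: float, f_hi_hz: float,
                          step_hz: float = 0.01) -> float:
    """Strongest frequency in [f_lo_hz, f_hi_hz], returned in cycles per minute.

    Removes the mean, then projects onto complex exponentials at each
    candidate frequency (a direct DFT evaluated on a fine grid).
    """
    mean = sum(signal) / len(signal)
    x = [v - mean for v in signal]
    best_f, best_power = f_lo_hz, -1.0
    n_steps = int(round((f_hi_hz - f_lo_hz) / step_hz))
    for k in range(n_steps + 1):
        f = f_lo_hz + k * step_hz
        w = 2.0 * math.pi * f / fs_hz
        re = sum(v * math.cos(w * n) for n, v in enumerate(x))
        im = sum(v * math.sin(w * n) for n, v in enumerate(x))
        power = re * re + im * im
        if power > best_power:
            best_f, best_power = f, power
    return best_f * 60.0


if __name__ == "__main__":
    # Synthetic, noise-free test signal (not measured data): 1 mm breathing
    # at 0.25 Hz plus 0.3 mm heartbeat at 1.2 Hz, sampled at 20 Hz for 40 s.
    fs = 20.0
    lam = 299_792_458.0 / 145e9  # wavelength at 145 GHz
    chest = [1e-3 * math.sin(2 * math.pi * 0.25 * n / fs)
             + 0.3e-3 * math.sin(2 * math.pi * 1.2 * n / fs) for n in range(800)]
    phases = [4.0 * math.pi * d / lam for d in chest]
    disp = phase_to_displacement_m(phases, lam)
    print("breaths/min:", dominant_rate_per_min(disp, fs, 0.1, 0.5))
    print("beats/min:", dominant_rate_per_min(disp, fs, 0.8, 2.0))
```

Searching separate bands keeps the much larger breathing motion from masking the heartbeat; a production system would also need phase unwrapping, filtering, and motion-artifact rejection.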

Imec has also proposed 3D gesture control using its compact (6.5 mm²) single-chip solution. Here, the radar transmits omnidirectionally and calculates the angle of arrival from the phase differences measured at its four receive antennas. To interpret the angle-of-arrival estimates, imec is training an algorithm on data from 25 people performing a set of seven gestures. This technology, hardware and software together, can offer true 3D gesture recognition for consumer products.
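
For two antennas spaced d apart, a plane wave arriving at angle θ from broadside produces a phase difference Δφ = 2πd sin θ / λ, so θ = arcsin(λΔφ / (2πd)). The sketch below applies this to a hypothetical 2×2 arrangement of four receive antennas at half-wavelength spacing, averaging the two horizontal and the two vertical pairs. The actual antenna layout and processing used by imec are not given here, so the layout, the ordering of `rx`, and the function names are assumptions.

```python
import cmath
import math
from typing import Sequence, Tuple


def angle_from_phase(delta_phi_rad: float, spacing_m: float, wavelength_m: float) -> float:
    """Angle from broadside (radians) given the phase difference between two antennas.

    Uses delta_phi = 2*pi*d*sin(theta) / lambda, solved for theta.
    """
    s = wavelength_m * delta_phi_rad / (2.0 * math.pi * spacing_m)
    if not -1.0 <= s <= 1.0:
        raise ValueError("phase difference is inconsistent with the antenna spacing")
    return math.asin(s)


def direction_2x2(rx: Sequence[complex], spacing_m: float,
                  wavelength_m: float) -> Tuple[float, float]:
    """Horizontal and vertical arrival angles (degrees) for a hypothetical 2x2 array.

    rx holds complex samples ordered [(0,0), (d,0), (0,d), (d,d)] by (x, y) position.
    Each angle is measured from broadside along its own axis.
    """
    s00, s10, s01, s11 = rx
    # Phase step along x, averaged over both rows; along y, averaged over both columns.
    dphi_x = cmath.phase(s10 * s00.conjugate() + s11 * s01.conjugate())
    dphi_y = cmath.phase(s01 * s00.conjugate() + s11 * s10.conjugate())
    horizontal = angle_from_phase(dphi_x, spacing_m, wavelength_m)
    vertical = angle_from_phase(dphi_y, spacing_m, wavelength_m)
    return math.degrees(horizontal), math.degrees(vertical)


if __name__ == "__main__":
    lam = 299_792_458.0 / 145e9  # wavelength at 145 GHz
    d = lam / 2
    u, v = math.sin(math.radians(20)), math.sin(math.radians(-10))
    # Synthetic plane-wave samples for a hand at +20 deg horizontally, -10 deg vertically.
    rx = [cmath.exp(2j * math.pi * (x * u + y * v) / lam)
          for x, y in ((0, 0), (d, 0), (0, d), (d, d))]
    print(direction_2x2(rx, d, lam))  # approximately (20.0, -10.0)
```

Half-wavelength spacing keeps |Δφ| ≤ π across the full ±90° field, avoiding angle ambiguity; a gesture classifier such as the one imec is training would then operate on sequences of these angle estimates.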