Generative Adversarial Networks (GANs) have a wide range of current and potential applications, from computer gaming to facial recognition and aging software, whether it’s reconstructing 3D models from images or improving photos from space.

A GAN pairs two networks: a generator that produces synthetic images and a discriminator that tries to tell them apart from real ones. Trained against each other, the two improve until the generator produces realistic synthetic images.

And that’s just what NVIDIA Research has done with GauGAN, which was demoed at the GPU Technology Conference. GauGAN’s generator produces images and presents them to its discriminator; the generator then improves realism and adjusts style, season, and quality of light to produce impressively convincing images.
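The back-and-forth between generator and discriminator can be sketched with the textbook GAN losses. This is a minimal illustration, not GauGAN’s actual training objective, which the story does not describe; the function names and the example scores are assumptions made up for this sketch.

```python
import math

def _clamp(p, eps=1e-7):
    # Keep probabilities away from 0 and 1 so log() stays finite.
    return min(max(p, eps), 1.0 - eps)

def discriminator_loss(d_real, d_fake):
    """Binary cross-entropy: real images should score near 1, fakes near 0."""
    real_term = -sum(math.log(_clamp(p)) for p in d_real) / len(d_real)
    fake_term = -sum(math.log(1.0 - _clamp(p)) for p in d_fake) / len(d_fake)
    return real_term + fake_term

def generator_loss(d_fake):
    """Non-saturating generator loss: the generator wants its fakes scored near 1."""
    return -sum(math.log(_clamp(p)) for p in d_fake) / len(d_fake)

# Hypothetical discriminator scores for generated images, early and late in training.
early_fakes = [0.1, 0.2]   # discriminator easily spots the fakes
later_fakes = [0.6, 0.7]   # fakes have become more convincing

print(generator_loss(early_fakes) > generator_loss(later_fakes))
```

As the generator’s images fool the discriminator more often, its loss falls, while the discriminator’s loss rises unless it also improves. That tension is what drives both networks forward.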

“It’s much easier to brainstorm designs with simple sketches, and this technology is able to convert sketches into highly realistic images,” said Bryan Catanzaro, vice president of applied deep learning research at NVIDIA. “It’s like a coloring book picture that describes where a tree is, where the sun is, where the sky is,” Catanzaro said. “And then the neural network is able to fill in all of the detail and texture, and the reflections, shadows and colors, based on what it has learned about real images.”

Here’s a video of how it works.

GauGAN was trained on a million images and has learned to fill in detail based on what it learned from them. Changing the labels can transform a landscape image with a stand of trees from summer to winter, with appropriate changes in leaf and ground imagery (grass to snow, for example).
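To make the label-changing idea concrete, here is a small sketch of a segmentation map as a grid of class labels, with grass relabeled as snow. The label ids, the `relabel` helper, and the tiny grid are all hypothetical; the story does not describe GauGAN’s real label set or input format.

```python
# Hypothetical class ids for a toy segmentation map.
SKY, TREE, GRASS, SNOW = 0, 1, 2, 3

def relabel(seg_map, old, new):
    """Return a copy of the map with every `old` label replaced by `new`."""
    return [[new if cell == old else cell for cell in row] for row in seg_map]

# A 3x4 "summer" scene: sky on top, a stand of trees, grass along the bottom.
summer = [
    [SKY,   SKY,   SKY,   SKY],
    [SKY,   TREE,  TREE,  SKY],
    [GRASS, GRASS, GRASS, GRASS],
]

# Swapping one label is the only change to the input;
# a generator would then render the ground as snow.
winter = relabel(summer, GRASS, SNOW)

for row in winter:
    print(row)
```

Only the label map changes here. In a system like the one described, the trained network would be responsible for rendering the matching textures.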

Among GauGAN’s many potential applications, the ability to create virtual worlds and better, easily modifiable prototypes is valuable not only for game developers but also for architects, urban planners, and landscape designers.

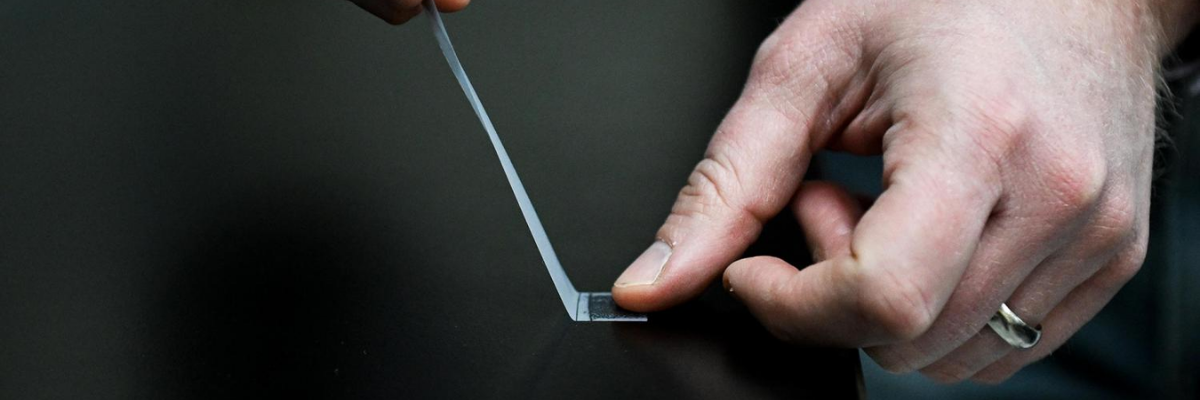

NVIDIA’s Catanzaro compared the GauGAN technology to a “smart paintbrush” that fills in details inside rough segmentation maps.

Story via NVIDIA