University of Michigan researchers have tapped faint, latent signals from arm nerves and amplified them to give amputees real-time, intuitive, finger-level control of a robotic hand. It is a major advance in mind-controlled prosthetics.

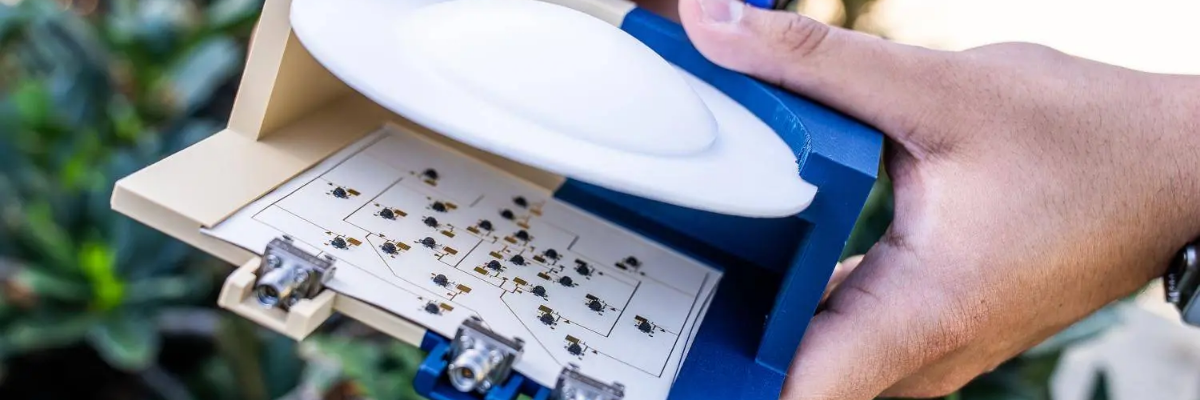

To achieve this, they developed a way to tame temperamental nerve endings, separate thick nerve bundles into smaller fibers that enable more precise control, and amplify the signals coming through those nerves. The approach involves tiny muscle grafts and machine learning algorithms borrowed from the brain-machine interface field.

“This is the biggest advance in motor control for people with amputations in many years,” said Paul Cederna, the Robert Oneal Collegiate Professor of Plastic Surgery at the U-M Medical School and a professor of biomedical engineering.

“We have developed a technique to provide individual finger control of prosthetic devices using the nerves in a patient’s residual limb. With it, we have been able to provide some of the most advanced prosthetic control that the world has seen.”

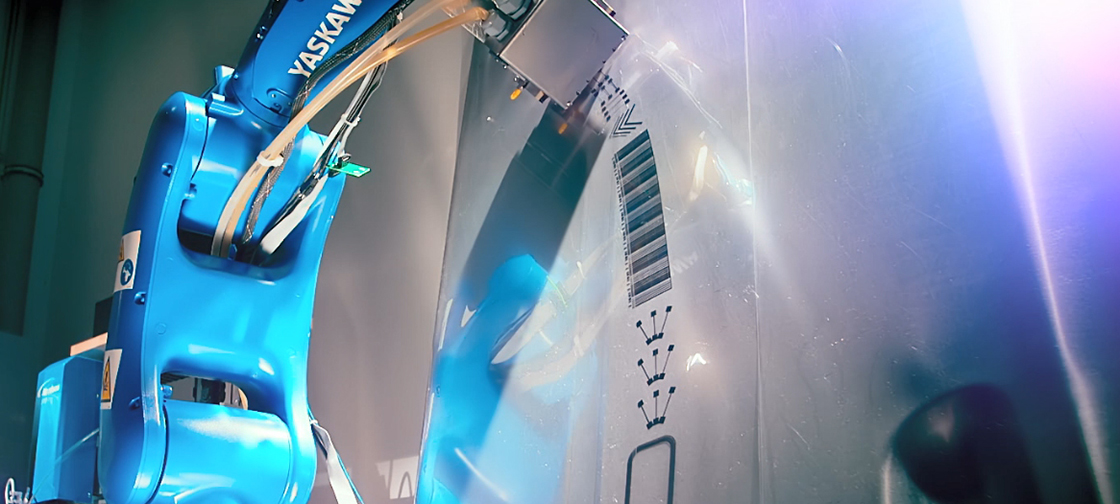

Study participants aren’t yet allowed to take the arm home, but in the lab they were able to pick up blocks with a pincer grasp; move their thumb in a continuous motion rather than choosing between two positions; lift spherical objects; and even play a version of Rock, Paper, Scissors called Rock, Paper, Pliers.

Read more about this breakthrough from the University of Michigan.