While many recent robotic advances have been based on the ways insects move, jump, and glide, a team of researchers from Newcastle University is now working with insects’ vision to improve visual perception in robots.

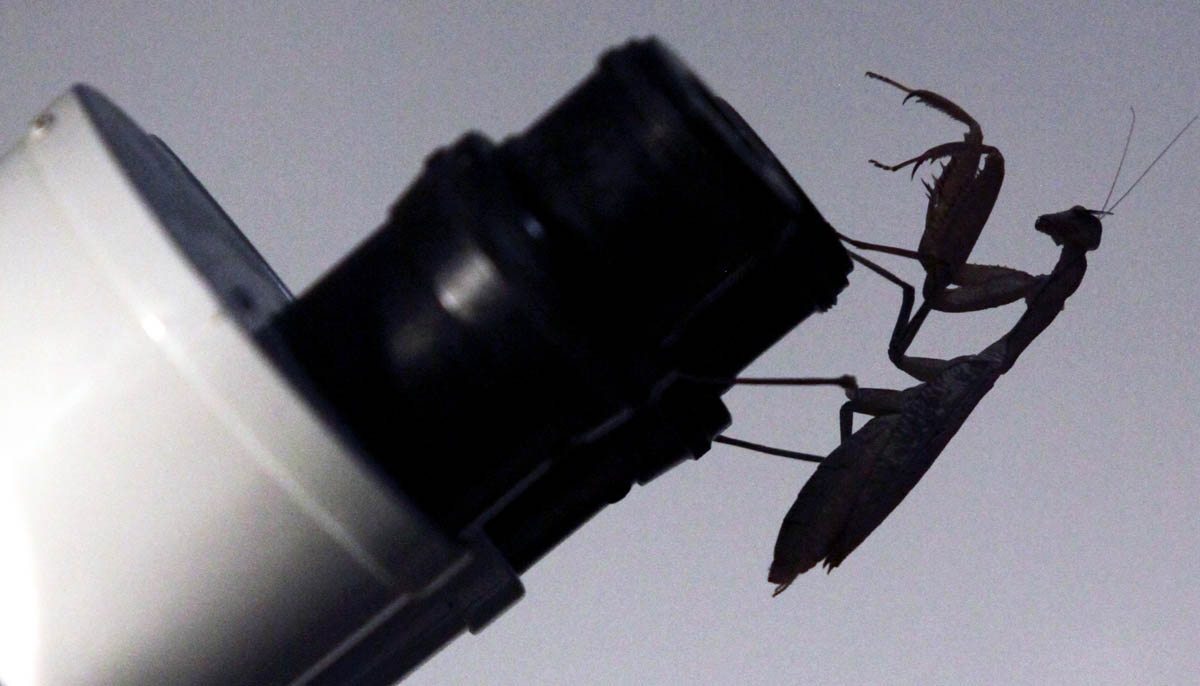

The team, led by Jenny Read, Professor of Vision Science, equipped praying mantises with tiny 3D glasses, attached with beeswax, to learn how the insects perceive the world.

“Better understanding of their simpler processing systems helps us understand how 3D vision evolved, and could lead to possible new algorithms for 3D depth perception in computers,” said Read.

In the team’s experiments, the praying mantises were shown short videos of simulated bugs moving around a computer screen. The mantises didn’t try to catch the bugs when they were shown in 2D. But when the bugs were shown in 3D, apparently floating in front of the screen, the mantises struck out at them, showing that they do use 3D vision in their daily routines.

Initially, the team tried a widely used contemporary 3D technology for humans, which relies on circular polarization to separate the two eyes’ images, but this method didn’t work because the insects were so close to the screen that the glasses failed to separate the images correctly.

“When this system failed we looked at the old-style 3D glasses with red and blue lenses. Since red light is poorly visible to mantises, we used green and blue glasses and an LED monitor with unusually narrow output in the green and blue wavelength,” said Dr. Vivek Nityananda, sensory biologist at Newcastle University and part of the research team.

In their study, the team showed not only that mantises have 3D vision, but also that this method can be used to deliver virtual 3D stimuli to insects. The team will now examine the algorithms used for depth perception in insects to better understand how human vision has evolved and to develop new ways of adding 3D technology to computers and robots.

Story via Newcastle University.