IBM (NYSE: IBM) today unveiled details of its upcoming IBM Telum Processor, which is designed to deliver deep learning inference to enterprise workloads to help address fraud in real time. Telum is IBM’s first processor with on-chip acceleration for real-time AI inferencing. Target applications include banking, finance, trading, insurance, and customer interactions. Availability is planned for the first half of 2022.

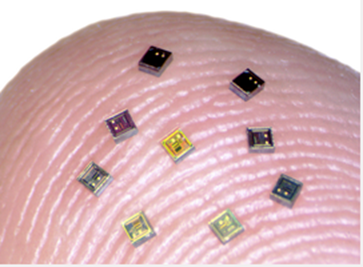

Each chip contains 8 processor cores with a deep super-scalar out-of-order instruction pipeline, running at a clock frequency of more than 5GHz and optimized for the demands of heterogeneous enterprise-class workloads. The design provides 32MB of cache per core and allows clients to scale up to 32 chips. The dual-chip module design contains 22 billion transistors and 19 miles of wire on 17 metal layers.

IBM Telum is designed to enable applications to run efficiently where the data resides, overcoming traditional enterprise AI approaches that require significant memory and data movement capabilities to handle inferencing. The accelerator is in close proximity to mission-critical data and applications, so enterprises can conduct high-volume inferencing for real-time sensitive transactions without relying on off-platform AI solutions.

Telum is the first IBM chip with technology created by the IBM Research AI Hardware Center. For more information, please visit www.ibm.com/it-infrastructure/z/capabilities/real-time-analytics.

Original Release: PR Newswire